
| stage | group | info |
|---|---|---|
| Configure | Configure | To determine the technical writer assigned to the Stage/Group associated with this page, see https://about.gitlab.com/handbook/engineering/ux/technical-writing/#assignments |

Getting started with Auto DevOps (FREE)

This step-by-step guide helps you use Auto DevOps to deploy a project hosted on GitLab.com to Google Kubernetes Engine.

This guide uses the GitLab native Kubernetes integration, so you don't need to create a Kubernetes cluster manually in the Google Cloud Platform console. You create and deploy a simple application based on a GitLab project template.

These instructions also work for a self-managed GitLab instance; ensure your own runners are configured and Google OAuth is enabled.

Configure your Google account

Before creating and connecting your Kubernetes cluster to your GitLab project, you need a Google Cloud Platform account. Sign in with an existing Google account, such as the one you use to access Gmail or Google Drive, or create a new one.

- Follow the steps described in the "Before you begin" section of the Kubernetes Engine documentation to enable the required APIs and related services.

- Ensure you've created a billing account with Google Cloud Platform.

NOTE: Every new Google Cloud Platform (GCP) account receives $300 in credit, and in partnership with Google, GitLab is able to offer an additional $200 for new GCP accounts to get started with the GitLab integration with Google Kubernetes Engine. Follow this link and apply for credit.

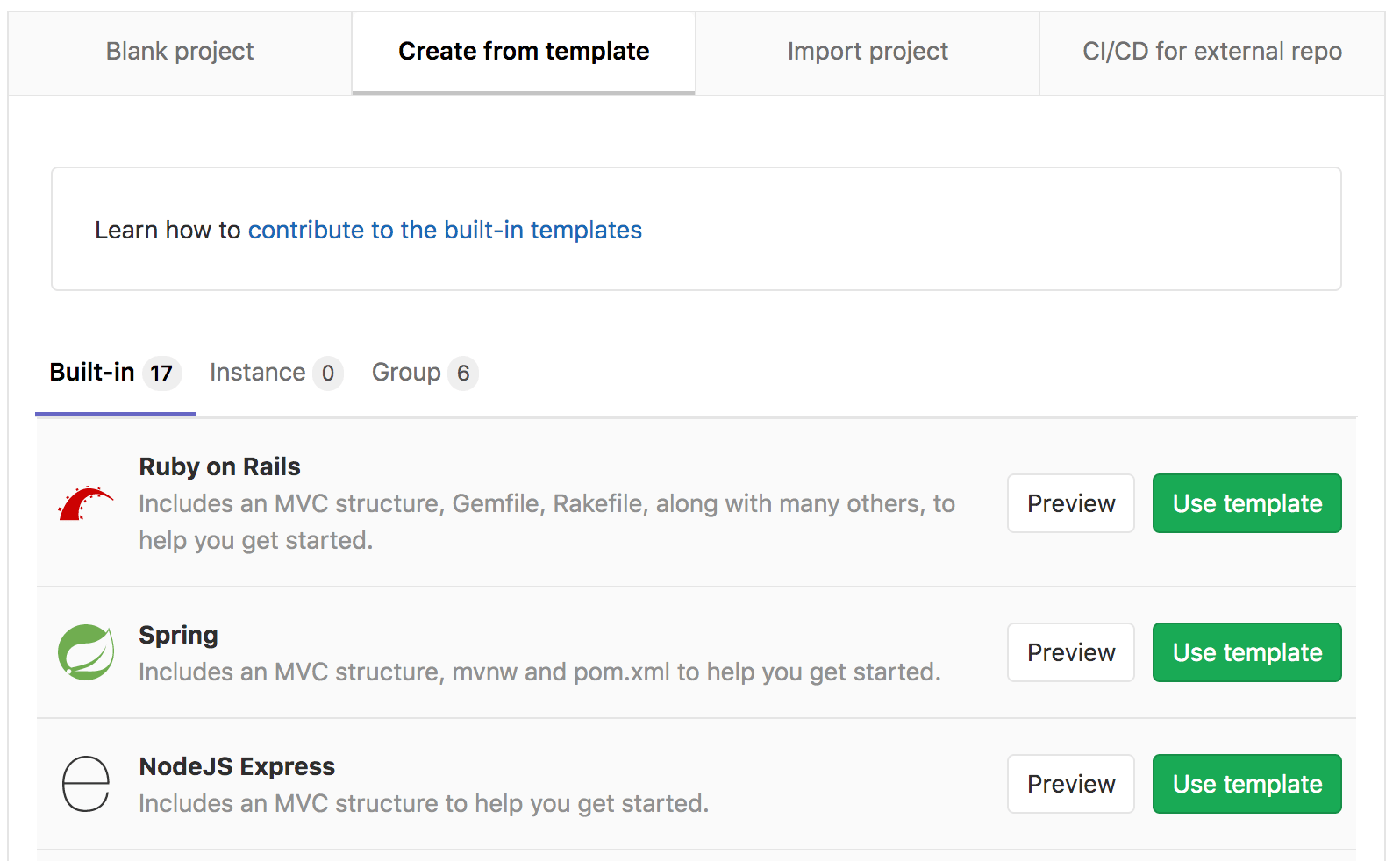

Create a new project from a template

This guide uses a GitLab project template to get started. As the name suggests, these templates provide a bare-bones application built on a well-known framework.

-

In GitLab, click the plus icon ({plus-square}) at the top of the navigation bar, and select New project.

-

Go to the Create from template tab, where you can choose among a Ruby on Rails, Spring, or NodeJS Express project. For this tutorial, use the Ruby on Rails template.

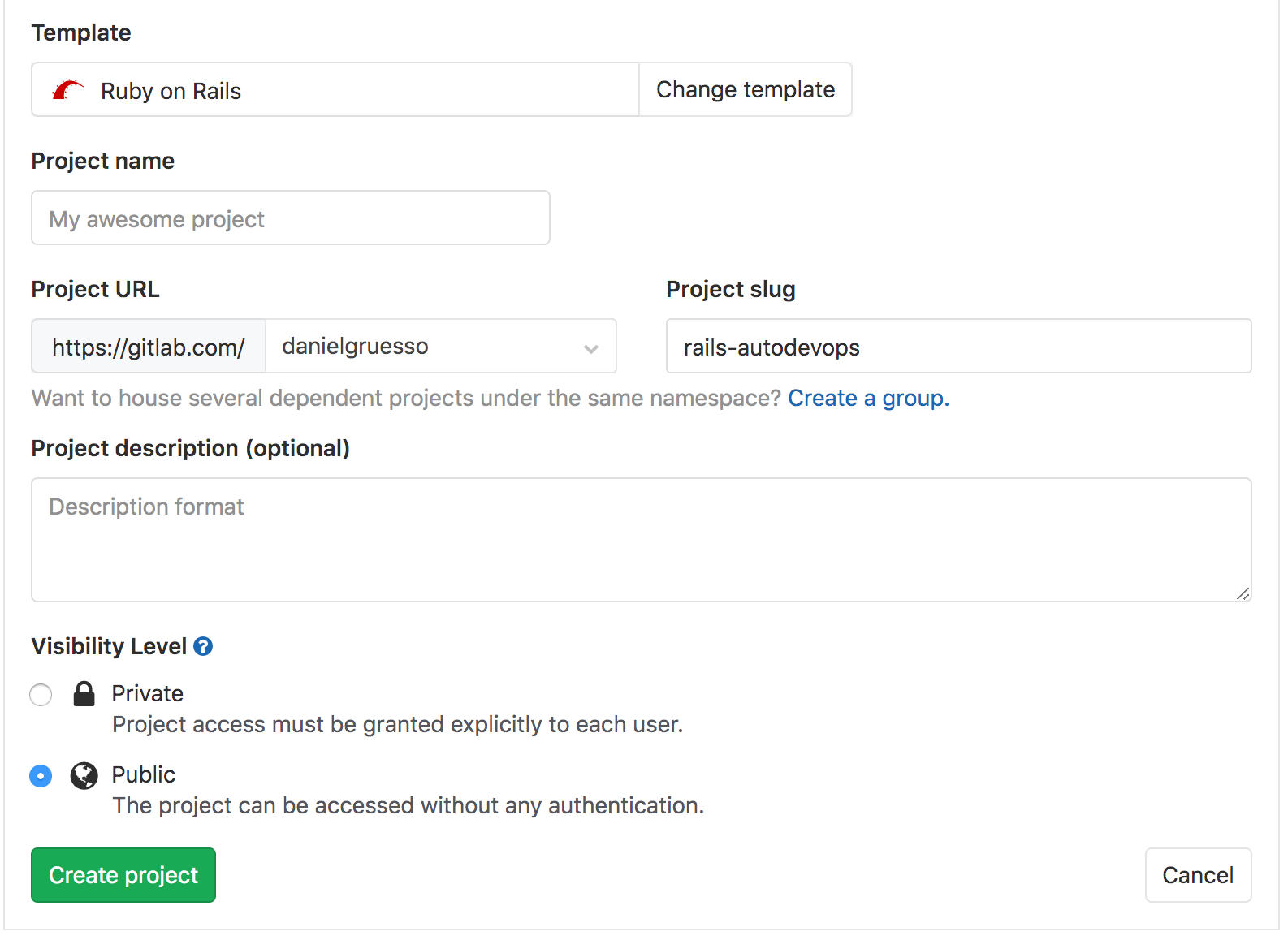

-

Give your project a name, optionally a description, and make it public so that you can take advantage of the features available in the GitLab Ultimate plan.

-

Click Create project.

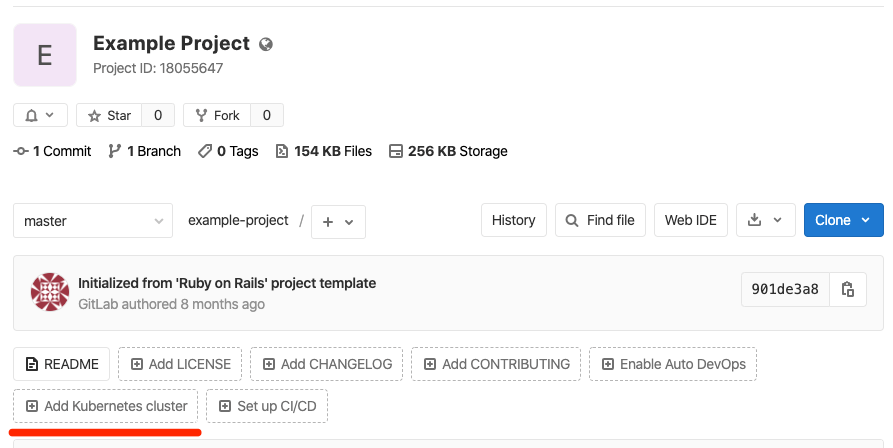

Now that you've created a project, create the Kubernetes cluster to deploy this project to.

Create a Kubernetes cluster from within GitLab

- On your project's landing page, click Add Kubernetes cluster. This option is also available when you navigate to Operations > Kubernetes.

- On the Add a Kubernetes cluster integration page, click the Create new cluster tab, then click Google GKE.

- Connect with your Google account, and click Allow to allow access to your Google account. (This authorization request is only displayed the first time you connect GitLab with your Google account.)

  After authorizing access, the Add a Kubernetes cluster integration page is displayed.

- In the Enter the details for your Kubernetes cluster section, provide details about your cluster:

  - Kubernetes cluster name

  - Environment scope - Leave this field unchanged.

  - Google Cloud Platform project - Select a project. When you configured your Google account, a project should have already been created for you.

  - Zone - The region/zone to create the cluster in.

  - Number of nodes

  - Machine type - For more information about machine types, see Google's documentation.

  - Enable Cloud Run for Anthos - Select this checkbox to use the Cloud Run, Istio, and HTTP Load Balancing add-ons for this cluster.

  - GitLab-managed cluster - Select this checkbox to allow GitLab to manage namespace and service accounts for this cluster.

- Click Create Kubernetes cluster.

After a couple of minutes, the cluster is created. You can also see its status on your GCP dashboard.

Install Ingress

After your cluster is running, you must install NGINX Ingress Controller as a load balancer, to route traffic from the internet to your application. Because you've created a Google GKE cluster in this guide, you can install NGINX Ingress Controller with Google Cloud Shell:

-

Go to your cluster's details page, and click the Advanced Settings tab.

-

Click the link to Google Kubernetes Engine to visit the cluster on Google Cloud Console.

-

On the GKE cluster page, select Connect, then click Run in Cloud Shell.

-

After the Cloud Shell starts, run these commands to install NGINX Ingress Controller:

helm repo add nginx-stable https://helm.nginx.com/stable helm repo update helm install nginx-ingress nginx-stable/nginx-ingress # Check that the ingress controller is installed successfully kubectl get service nginx-ingress-nginx-ingress -

A few minutes after you install NGINX, the load balancer obtains an IP address, and you can get the external IP address with this command:

kubectl get service nginx-ingress-nginx-ingress -ojson | jq -r '.status.loadBalancer.ingress[].ip'Copy this IP address, as you need it in the next step.

-

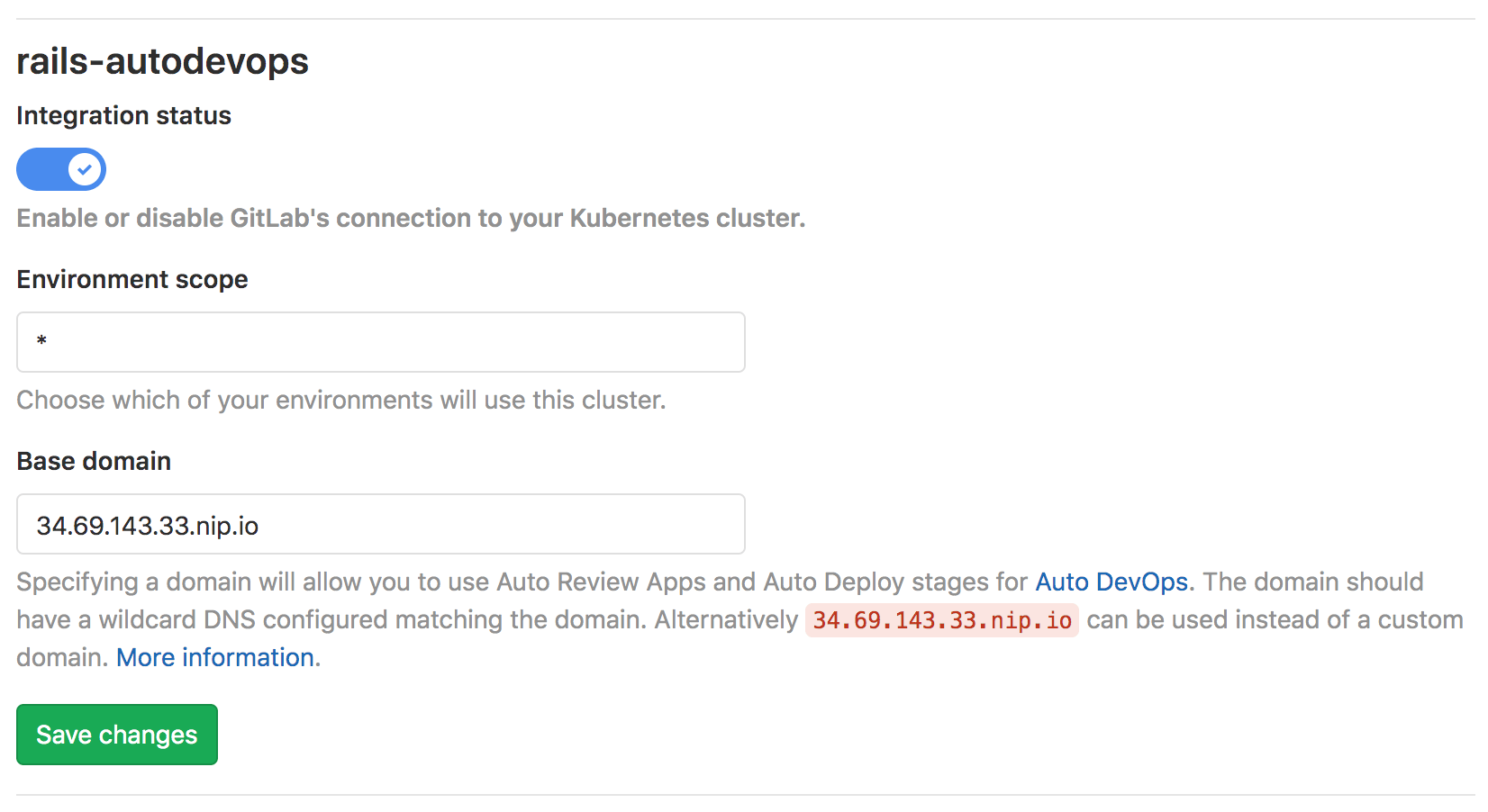

Go back to the cluster page on GitLab, and go to the Details tab.

- Add your Base domain. For this guide, use the domain

<IP address>.nip.io. - Click Save changes.

- Add your Base domain. For this guide, use the domain

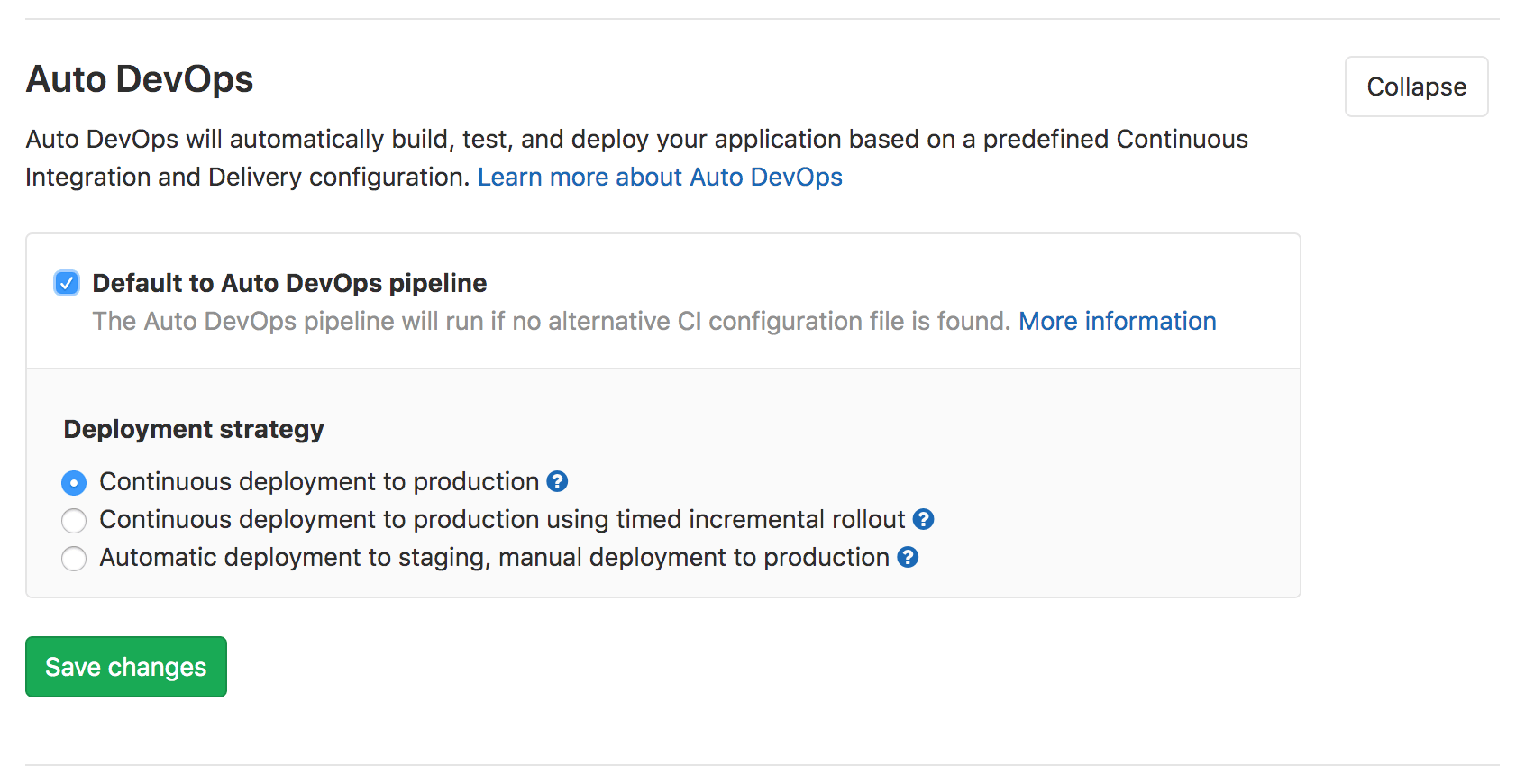
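As a small illustration of how the base domain is formed, the following sketch appends .nip.io to an external IP address. The address 203.0.113.10 is a placeholder assumed for this example, not a value from your cluster; substitute the IP address printed by the kubectl command in the previous step.

```shell
# Placeholder IP address (assumption for illustration only).
# Replace it with the external IP reported for your load balancer.
EXTERNAL_IP="203.0.113.10"

# The base domain is the IP address followed by ".nip.io".
BASE_DOMAIN="${EXTERNAL_IP}.nip.io"

# Print the value to paste into the Base domain field.
echo "$BASE_DOMAIN"
```

The printed value is what you enter in the Base domain field on the Details tab.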

Enable Auto DevOps (optional)

While Auto DevOps is enabled by default, Auto DevOps can be disabled at both the instance level (for self-managed instances) and the group level. Complete these steps to enable Auto DevOps if it's disabled:

- Navigate to Settings > CI/CD > Auto DevOps, and click Expand.

- Select Default to Auto DevOps pipeline to display more options.

- In Deployment strategy, select your desired continuous deployment strategy to deploy the application to production after the pipeline successfully runs on the master branch.

- Click Save changes.

After you save your changes, GitLab creates a new pipeline. To view it, go to {rocket} CI/CD > Pipelines.

In the next section, we explain what each job does in the pipeline.

Deploy the application

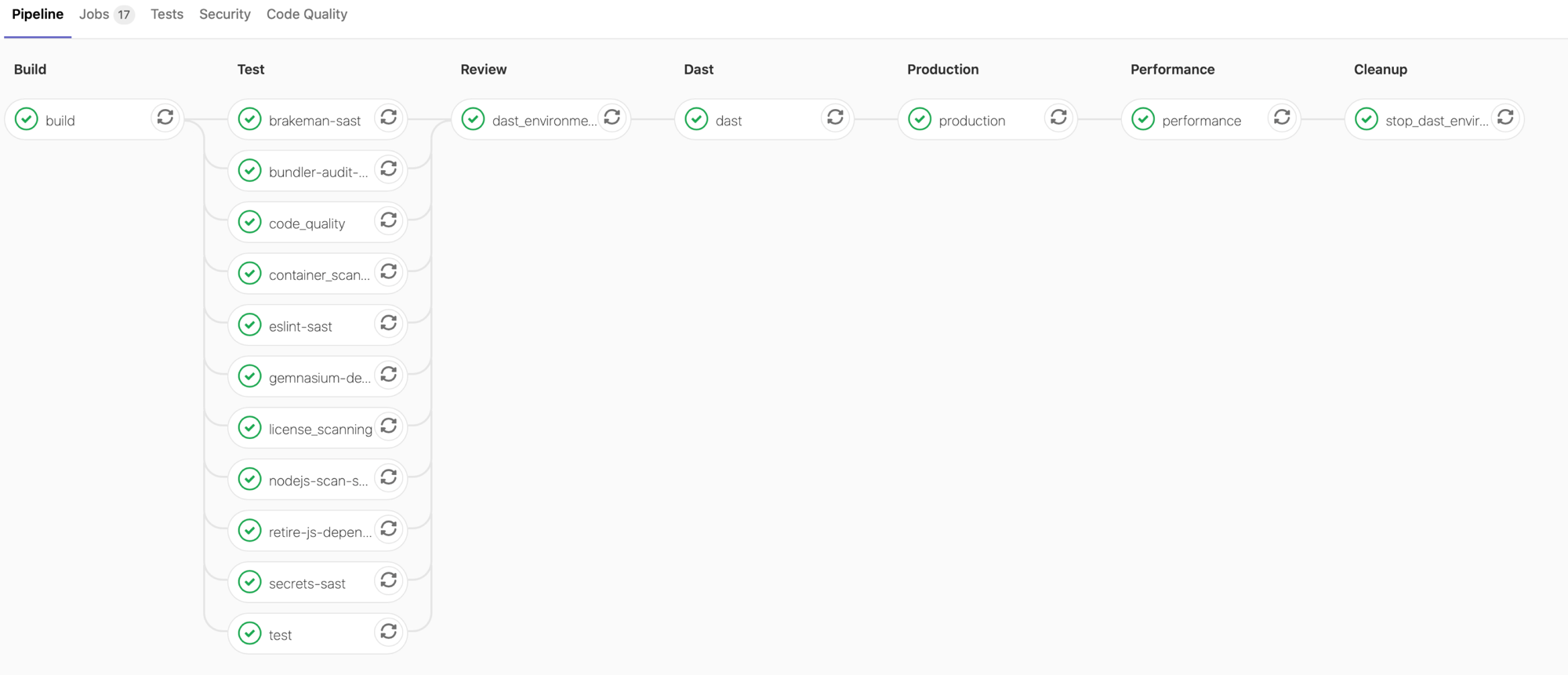

When your pipeline runs, what is it doing?

To view the jobs in the pipeline, click the pipeline's status badge. The {status_running} icon displays when pipeline jobs are running, and updates without refreshing the page to {status_success} (for success) or {status_failed} (for failure) when the jobs complete.

The jobs are separated into stages:

- Build - The application builds a Docker image and uploads it to your project's Container Registry (Auto Build).

- Test - GitLab runs various checks on the application, but all jobs except test are allowed to fail in the test stage:

  - The test job runs unit and integration tests by detecting the language and framework (Auto Test)

  - The code_quality job checks the code quality and is allowed to fail (Auto Code Quality)

  - The container_scanning job checks the Docker container for vulnerabilities and is allowed to fail (Auto Container Scanning)

  - The dependency_scanning job checks if the application has any dependencies susceptible to vulnerabilities and is allowed to fail (Auto Dependency Scanning) (ULTIMATE)

  - Jobs suffixed with -sast run static analysis on the current code to check for potential security issues, and are allowed to fail (Auto SAST) (ULTIMATE)

  - The secret-detection job checks for leaked secrets and is allowed to fail (Auto Secret Detection) (ULTIMATE)

  - The license_management job searches the application's dependencies to determine each of their licenses and is allowed to fail (Auto License Compliance) (ULTIMATE)

- Review - Pipelines on master include this stage with a dast_environment_deploy job. To learn more, see Dynamic Application Security Testing (DAST).

- Production - After the tests and checks finish, the application deploys in Kubernetes (Auto Deploy).

- Performance - Performance tests are run on the deployed application (Auto Browser Performance Testing). (PREMIUM)

- Cleanup - Pipelines on master include this stage with a stop_dast_environment job.

After running a pipeline, you should view your deployed website and learn how to monitor it.

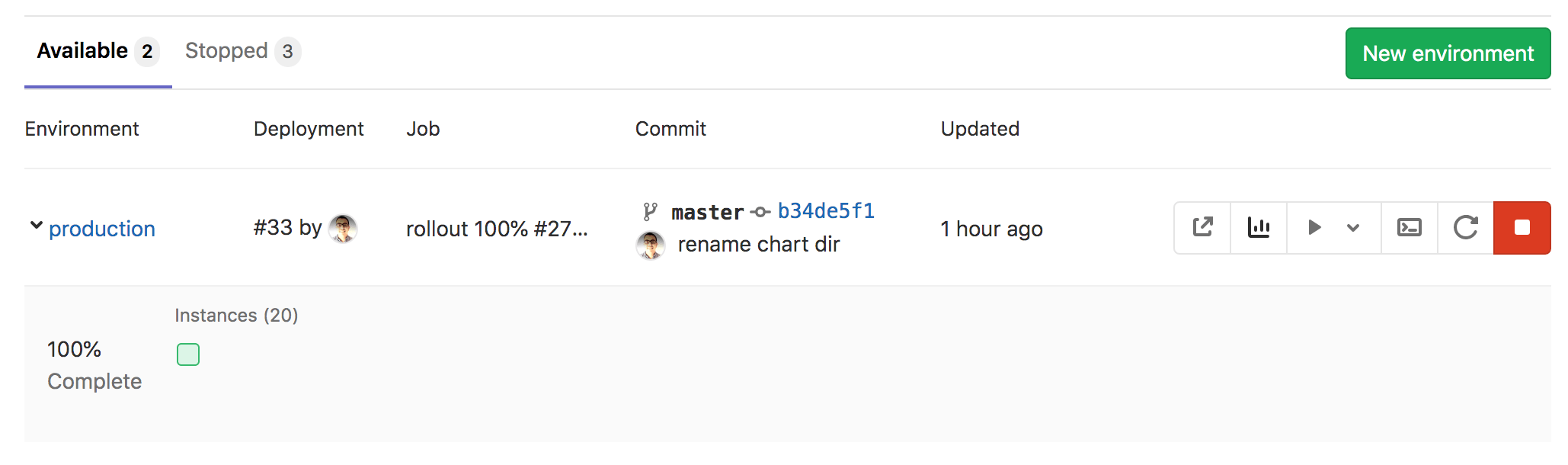

Monitor your project

After successfully deploying your application, you can view its website and check on its health on the Environments page by navigating to Operations > Environments. This page displays details about the deployed applications, and the right-hand column displays icons that link you to common environment tasks:

- Open live environment ({external-link}) - Opens the URL of the application deployed in production

- Monitoring ({chart}) - Opens the metrics page where Prometheus collects data about the Kubernetes cluster and how the application affects it in terms of memory usage, CPU usage, and latency

- Deploy to ({play} {angle-down}) - Displays a list of environments you can deploy to

- Terminal ({terminal}) - Opens a web terminal session inside the container where the application is running

- Re-deploy to environment ({repeat}) - For more information, see Retrying and rolling back

- Stop environment ({stop}) - For more information, see Stopping an environment

GitLab displays the Deploy Board below the environment's information, with squares representing pods in your Kubernetes cluster, color-coded to show their status. Hovering over a square on the deploy board displays the state of the deployment, and clicking the square takes you to the pod's logs page.

NOTE: The example shows only one pod hosting the application at the moment, but you can add more pods by defining the REPLICAS variable in Settings > CI/CD > Environment variables.
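As an alternative to the settings page, the REPLICAS variable could also be created through the GitLab project-level CI/CD variables API. The following is a minimal sketch rather than a required step: the project ID 12345678, the value 2, and the access token placeholder are assumptions for illustration. The script only prints the curl command so you can review it before running it yourself.

```shell
# Hypothetical values: replace with your own instance URL and project ID.
GITLAB_URL="https://gitlab.com"
PROJECT_ID="12345678"
REPLICAS="2"

# Endpoint of the project-level CI/CD variables API.
API_ENDPOINT="${GITLAB_URL}/api/v4/projects/${PROJECT_ID}/variables"

# Print the request instead of sending it, so nothing changes until you run it.
printf 'curl --request POST --header "PRIVATE-TOKEN: <your_access_token>" "%s" --form "key=REPLICAS" --form "value=%s"\n' \
  "$API_ENDPOINT" "$REPLICAS"
```

Replace <your_access_token> with a token that has API access to the project before running the printed command.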

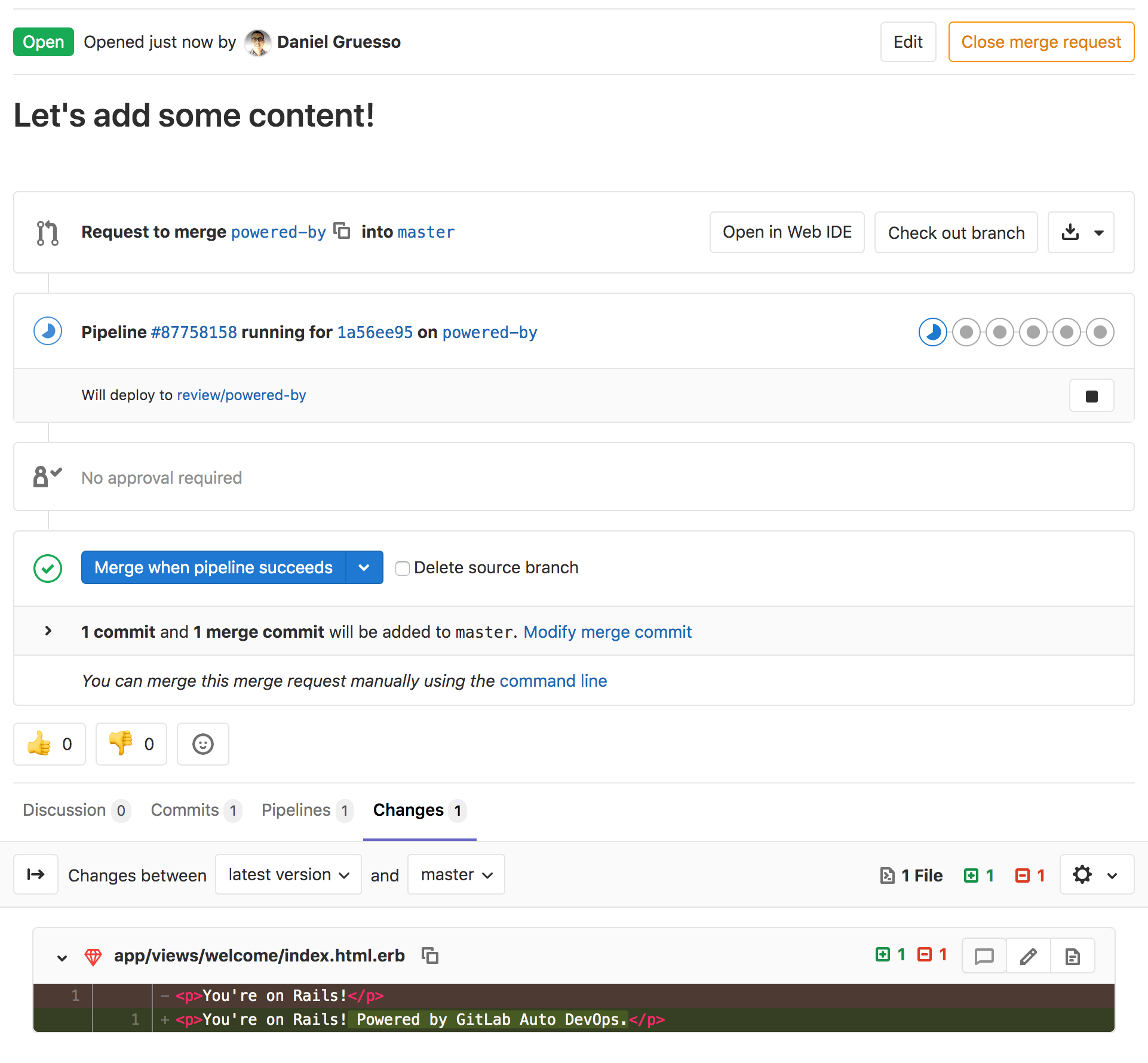

Work with branches

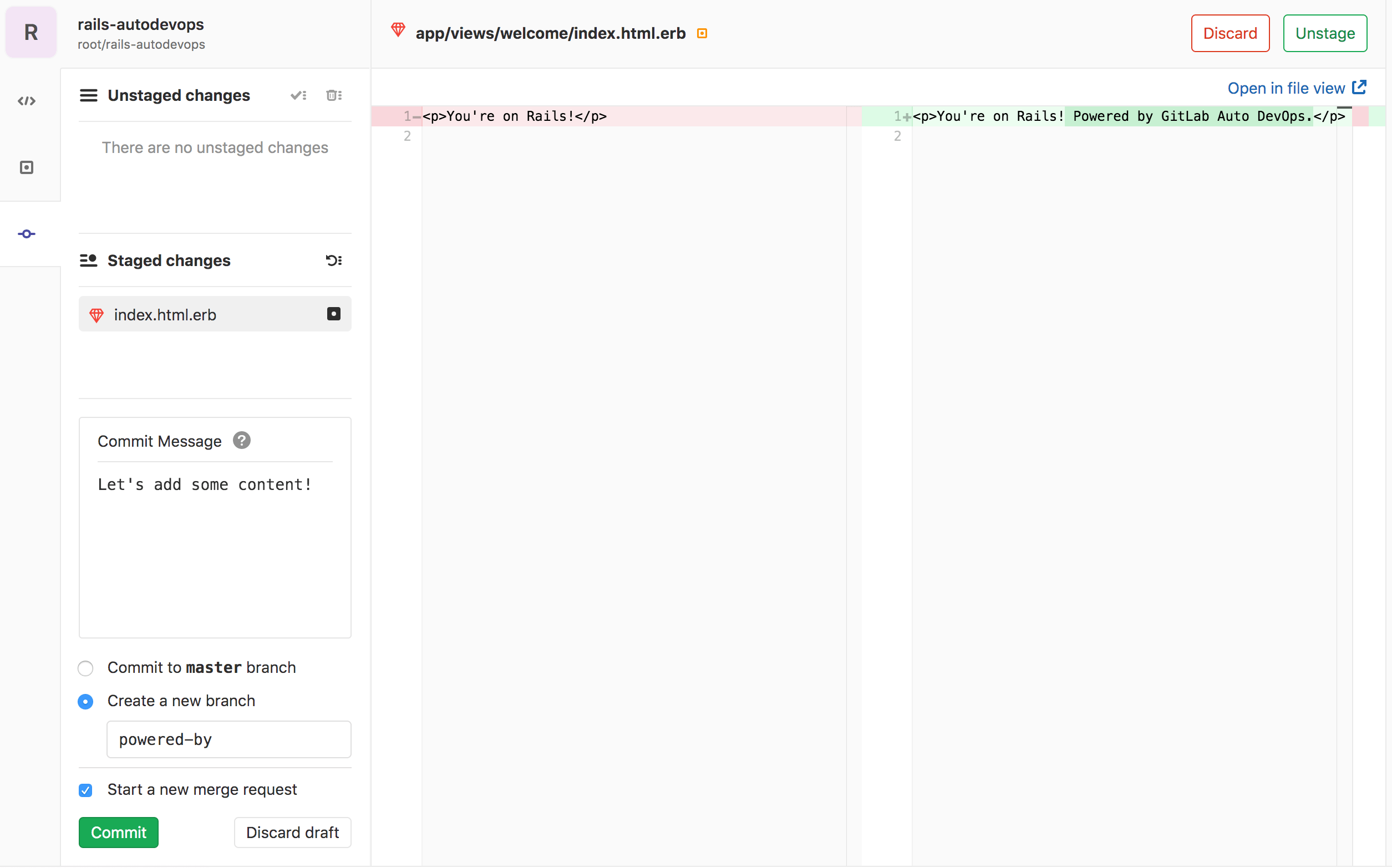

Following the GitLab flow, you should next create a feature branch to add content to your application:

- In your project's repository, navigate to the following file: app/views/welcome/index.html.erb. This file should only contain a paragraph: <p>You're on Rails!</p>.

- Open the GitLab Web IDE to make the change.

- Edit the file so it contains:

      <p>You're on Rails! Powered by GitLab Auto DevOps.</p>

- Stage the file. Add a commit message, then create a new branch and a merge request by clicking Commit.
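If you prefer the command line to the Web IDE, the same change can be sketched with local Git commands. The example below runs in a throwaway repository so that it is self-contained. The branch name update-welcome-page, the user identity, and the initial commit are assumptions made for illustration.

```shell
set -e

# Create a throwaway repository (stands in for a clone of your project).
WORKDIR="$(mktemp -d)"
cd "$WORKDIR"
git init --quiet
git config user.email "dev@example.com"
git config user.name "Example Dev"

# Recreate the template's welcome page and commit it as the starting point.
mkdir -p app/views/welcome
echo "<p>You're on Rails!</p>" > app/views/welcome/index.html.erb
git add .
git commit --quiet -m "Initial commit"

# Create the feature branch (hypothetical name) and commit the change.
git checkout --quiet -b update-welcome-page
echo "<p>You're on Rails! Powered by GitLab Auto DevOps.</p>" > app/views/welcome/index.html.erb
git commit --quiet -am "Update welcome page"

# Show which branch now holds the change.
CURRENT_BRANCH="$(git rev-parse --abbrev-ref HEAD)"
echo "$CURRENT_BRANCH"
```

In a clone of your actual project, you would skip the setup steps, push the branch with git push --set-upstream origin update-welcome-page, and then open a merge request from the pushed branch.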

After submitting the merge request, GitLab runs your pipeline, and all the jobs in it, as described previously, in addition to a few more that run only on branches other than master.

After a few minutes a test fails, which means a test was 'broken' by your change. Click on the failed test job to see more information about it:

    Failure:
    WelcomeControllerTest#test_should_get_index [/app/test/controllers/welcome_controller_test.rb:7]:
    <You're on Rails!> expected but was
    <You're on Rails! Powered by GitLab Auto DevOps.>..
    Expected 0 to be >= 1.

    bin/rails test test/controllers/welcome_controller_test.rb:4

To fix the broken test:

- Return to the Overview page for your merge request, and click Open in Web IDE.

- In the left-hand directory of files, find the test/controllers/welcome_controller_test.rb file, and click it to open it.

- Change line 7 to say You're on Rails! Powered by GitLab Auto DevOps.

- Click Commit.

- In the left-hand column, under Unstaged changes, click the checkmark icon ({stage-all}) to stage the changes.

- Write a commit message, and click Commit.

Return to the Overview page of your merge request, and you should not only see the test passing, but also the application deployed as a review application. You can visit it by clicking the View app {external-link} button to see your changes deployed.

After merging the merge request, GitLab runs the pipeline on the master branch, and then deploys the application to production.

Conclusion

After implementing this project, you should have a solid understanding of the basics of Auto DevOps. You went from building and testing to deploying and monitoring an application, all in GitLab. Despite its automatic nature, Auto DevOps can also be configured and customized to fit your workflow. Here are some helpful resources for further reading: