Adding and removing Kubernetes clusters

GitLab offers integrated cluster creation for the following Kubernetes providers:

- Google Kubernetes Engine (GKE).

- Amazon Elastic Kubernetes Service (EKS).

In addition, GitLab can integrate with any standard Kubernetes provider, either on-premise or hosted.

TIP: Tip: Every new Google Cloud Platform (GCP) account receives $300 in credit upon sign up, and in partnership with Google, GitLab is able to offer an additional $200 for new GCP accounts to get started with GitLab's Google Kubernetes Engine Integration. All you have to do is follow this link and apply for credit.

Before you begin

Before adding a Kubernetes cluster using GitLab, you need:

- GitLab itself. Either:

- A GitLab.com account.

- A self-managed installation with GitLab version 12.5 or later. This will ensure the GitLab UI can be used for cluster creation.

- The following GitLab access:

- Maintainer access to a project for a project-level cluster.

- Maintainer access to a group for a group-level cluster.

- Admin Area access for a self-managed instance-level cluster. (CORE ONLY)

GKE requirements

Before creating your first cluster on Google GKE with GitLab's integration, make sure the following requirements are met:

- A billing account set up with access.

- The Kubernetes Engine API and related services are enabled. This should work immediately, but may take up to 10 minutes after you create a project. For more information, see the "Before you begin" section of the Kubernetes Engine docs.

EKS requirements

Before creating your first cluster on Amazon EKS with GitLab's integration, make sure the following requirements are met:

- An Amazon Web Services account is set up and you are able to log in.

- You have permissions to manage IAM resources.

- If you want to use an existing EKS cluster:

- An Amazon EKS cluster with worker nodes properly configured.

- kubectl installed and configured for access to the EKS cluster.

Additional requirements for self-managed instances (CORE ONLY)

If you are using a self-managed GitLab instance, GitLab must first be configured with a set of Amazon credentials. These credentials will be used to assume an Amazon IAM role provided by the user creating the cluster. Create an IAM user and ensure it has permissions to assume the role(s) that your users will use to create EKS clusters.

For example, the following policy document allows assuming a role whose name starts with gitlab-eks- in account 123456789012:

    {
      "Version": "2012-10-17",
      "Statement": {
        "Effect": "Allow",
        "Action": "sts:AssumeRole",
        "Resource": "arn:aws:iam::123456789012:role/gitlab-eks-*"
      }
    }
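If several accounts or role-name prefixes are involved, the same policy document can be generated instead of edited by hand. The following is a minimal sketch, not part of GitLab or the AWS CLI: render_assume_role_policy is a hypothetical helper name, and the account ID and prefix are the example values used above.

```shell
#!/bin/sh
# Hypothetical helper: print an sts:AssumeRole policy document for the
# given AWS account ID ($1) and role-name prefix ($2).
render_assume_role_policy() {
  account_id="$1"
  role_prefix="$2"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": {
    "Effect": "Allow",
    "Action": "sts:AssumeRole",
    "Resource": "arn:aws:iam::${account_id}:role/${role_prefix}*"
  }
}
EOF
}

# Example: reproduce the policy document shown above.
render_assume_role_policy 123456789012 gitlab-eks-
```

The output can be redirected to a file and attached to the IAM user as a policy.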

Generate an access key for the IAM user, and configure GitLab with the credentials:

- Navigate to Admin Area > Settings > Integrations and expand the Amazon EKS section.

- Check Enable Amazon EKS integration.

- Enter the account ID and access key credentials into the respective Account ID, Access key ID, and Secret access key fields.

- Click Save changes.

Access controls

When creating a cluster in GitLab, you will be asked if you would like to create either:

- A Role-based access control (RBAC) cluster.

- An Attribute-based access control (ABAC) cluster.

NOTE: Note: RBAC is recommended and the GitLab default.

GitLab creates the necessary service accounts and privileges to install and run GitLab managed applications. When GitLab creates the cluster, a gitlab service account with cluster-admin privileges is created in the default namespace to manage the newly created cluster.

NOTE: Note: Restricted service account for deployment was introduced in GitLab 11.5.

When you install Helm into your cluster, the tiller service account is created with cluster-admin privileges in the gitlab-managed-apps namespace.

This service account will be:

- Added to the installed Helm Tiller.

- Used by Helm to install and run GitLab managed applications.

Helm will also create additional service accounts and other resources for each installed application. Consult the documentation of the Helm charts for each application for details.

If you are adding an existing Kubernetes cluster, ensure the token of the account has administrator privileges for the cluster.

The resources created by GitLab differ depending on the type of cluster.

Important notes

Note the following about access controls:

- Environment-specific resources are only created if your cluster is managed by GitLab.

- If your cluster was created before GitLab 12.2, it will use a single namespace for all project environments.

RBAC cluster resources

GitLab creates the following resources for RBAC clusters.

| Name | Type | Details | Created when |
|---|---|---|---|
| gitlab | ServiceAccount | default namespace | Creating a new cluster |
| gitlab-admin | ClusterRoleBinding | cluster-admin roleRef | Creating a new cluster |
| gitlab-token | Secret | Token for gitlab ServiceAccount | Creating a new cluster |
| tiller | ServiceAccount | gitlab-managed-apps namespace | Installing Helm Tiller |
| tiller-admin | ClusterRoleBinding | cluster-admin roleRef | Installing Helm Tiller |
| Environment namespace | Namespace | Contains all environment-specific resources | Deploying to a cluster |
| Environment namespace | ServiceAccount | Uses namespace of environment | Deploying to a cluster |
| Environment namespace | Secret | Token for environment ServiceAccount | Deploying to a cluster |
| Environment namespace | RoleBinding | edit roleRef | Deploying to a cluster |

ABAC cluster resources

GitLab creates the following resources for ABAC clusters.

| Name | Type | Details | Created when |
|---|---|---|---|
| gitlab | ServiceAccount | default namespace | Creating a new cluster |
| gitlab-token | Secret | Token for gitlab ServiceAccount | Creating a new cluster |
| tiller | ServiceAccount | gitlab-managed-apps namespace | Installing Helm Tiller |
| tiller-admin | ClusterRoleBinding | cluster-admin roleRef | Installing Helm Tiller |
| Environment namespace | Namespace | Contains all environment-specific resources | Deploying to a cluster |
| Environment namespace | ServiceAccount | Uses namespace of environment | Deploying to a cluster |
| Environment namespace | Secret | Token for environment ServiceAccount | Deploying to a cluster |

Security of GitLab Runners

GitLab Runners have privileged mode enabled by default, which allows them to execute special commands and run Docker in Docker. This functionality is needed to run some of the Auto DevOps jobs. Because the containers run in privileged mode, you should be aware of some important details.

The privileged flag gives all capabilities to the running container, which in turn can do almost everything that the host can do. Be aware of the inherent security risk associated with performing docker run operations on arbitrary images, as they effectively have root access.

If you don't want to use GitLab Runner in privileged mode, either:

- Use shared Runners on GitLab.com. They don't have this security issue.

- Set up your own Runners using the configuration described at Shared Runners. This involves:

  - Making sure that you don't have it installed via the applications.

  - Installing a Runner using docker+machine.

Add new cluster

New clusters can be added using GitLab for:

- Google Kubernetes Engine.

- Amazon Elastic Kubernetes Service.

New GKE cluster

Starting from GitLab 12.4, all the GKE clusters provisioned by GitLab are VPC-native.

Important notes

Note the following:

- The Google authentication integration must be enabled in GitLab at the instance level. If that's not the case, ask your GitLab administrator to enable it. On GitLab.com, this is enabled.

- Starting from GitLab 12.1, all GKE clusters created by GitLab are RBAC-enabled. Take a look at the RBAC section for more information.

- Starting from GitLab 12.5, the cluster's pod address IP range will be set to /16 instead of the regular /14. /16 is a CIDR notation.

- GitLab requires basic authentication enabled and a client certificate issued for the cluster to set up an initial service account. Starting from GitLab 11.10, the cluster creation process will explicitly request that basic authentication and a client certificate are enabled.
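To make the /16 versus /14 pod address range concrete: an IPv4 CIDR block with prefix length n contains 2^(32 - n) addresses. The following sketch does that arithmetic in the shell; cidr_size is a hypothetical helper name, not a GitLab or gcloud command.

```shell
#!/bin/sh
# Hypothetical helper: number of IPv4 addresses in a CIDR block with
# prefix length $1, computed as 2^(32 - prefix).
cidr_size() {
  echo $(( 1 << (32 - $1) ))
}

echo "/14 -> $(cidr_size 14) addresses"   # 2^18
echo "/16 -> $(cidr_size 16) addresses"   # 2^16
```

A /16 range is therefore a quarter of the size of a /14 range.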

Creating the cluster on GKE

To create and add a new Kubernetes cluster to your project, group, or instance:

- Navigate to your:

- Project's Operations > Kubernetes page, for a project-level cluster.

- Group's Kubernetes page, for a group-level cluster.

- Admin Area > Kubernetes page, for an instance-level cluster.

- Click Add Kubernetes cluster.

- Under the Create new cluster tab, click Google GKE.

- Connect your Google account, if you haven't done so already, by clicking the Sign in with Google button.

- Choose your cluster's settings:

- Kubernetes cluster name - The name you wish to give the cluster.

- Environment scope - The environment associated with this cluster.

- Google Cloud Platform project - Choose the project you created in your GCP console that will host the Kubernetes cluster. Learn more about Google Cloud Platform projects.

- Zone - Choose the region zone under which the cluster will be created.

- Number of nodes - Enter the number of nodes you wish the cluster to have.

- Machine type - The machine type of the Virtual Machine instance that the cluster will be based on.

- Enable Cloud Run for Anthos - Check this if you want to use Cloud Run for Anthos for this cluster. See the Cloud Run for Anthos section for more information.

- GitLab-managed cluster - Leave this checked if you want GitLab to manage namespaces and service accounts for this cluster. See the Managed clusters section for more information.

- Finally, click the Create Kubernetes cluster button.

After a couple of minutes, your cluster will be ready to go. You can now proceed to install some pre-defined applications.

Cloud Run for Anthos

Introduced in GitLab 12.4.

You can choose to use Cloud Run for Anthos in place of installing Knative and Istio separately after the cluster has been created. This means that Cloud Run (Knative), Istio, and HTTP Load Balancing will be enabled on the cluster at create time and cannot be installed or uninstalled separately.

New EKS cluster

Introduced in GitLab 12.5.

To create and add a new Kubernetes cluster to your project, group, or instance:

- Navigate to your:

- Project's Operations > Kubernetes page, for a project-level cluster.

- Group's Kubernetes page, for a group-level cluster.

- Admin Area > Kubernetes page, for an instance-level cluster.

- Click Add Kubernetes cluster.

- Under the Create new cluster tab, click Amazon EKS. You will be provided with an Account ID and External ID to use in the next step.

- In the IAM Management Console, create an IAM role:

  - From the left panel, select Roles.

  - Click Create role.

  - Under Select type of trusted entity, select Another AWS account.

  - Enter the Account ID from GitLab into the Account ID field.

  - Check Require external ID.

  - Enter the External ID from GitLab into the External ID field.

  - Click Next: Permissions.

  - Click Create Policy, which will open a new window.

  - Select the JSON tab, and paste in the following snippet in place of the existing content:

        {
          "Version": "2012-10-17",
          "Statement": [
            {
              "Effect": "Allow",
              "Action": [
                "autoscaling:CreateAutoScalingGroup",
                "autoscaling:DescribeAutoScalingGroups",
                "autoscaling:DescribeScalingActivities",
                "autoscaling:UpdateAutoScalingGroup",
                "autoscaling:CreateLaunchConfiguration",
                "autoscaling:DescribeLaunchConfigurations",
                "cloudformation:CreateStack",
                "cloudformation:DescribeStacks",
                "ec2:AuthorizeSecurityGroupEgress",
                "ec2:AuthorizeSecurityGroupIngress",
                "ec2:RevokeSecurityGroupEgress",
                "ec2:RevokeSecurityGroupIngress",
                "ec2:CreateSecurityGroup",
                "ec2:createTags",
                "ec2:DescribeImages",
                "ec2:DescribeKeyPairs",
                "ec2:DescribeRegions",
                "ec2:DescribeSecurityGroups",
                "ec2:DescribeSubnets",
                "ec2:DescribeVpcs",
                "eks:CreateCluster",
                "eks:DescribeCluster",
                "iam:AddRoleToInstanceProfile",
                "iam:AttachRolePolicy",
                "iam:CreateRole",
                "iam:CreateInstanceProfile",
                "iam:CreateServiceLinkedRole",
                "iam:GetRole",
                "iam:ListRoles",
                "iam:PassRole",
                "ssm:GetParameters"
              ],
              "Resource": "*"
            }
          ]
        }

    NOTE: Note: These permissions give GitLab the ability to create resources, but not delete them. This means that if an error is encountered during the creation process, changes will not be rolled back and you must remove resources manually. You can do this by deleting the relevant CloudFormation stack.

  - Click Review policy.

  - Enter a suitable name for this policy, and click Create Policy. You can now close this window.

  - Switch back to the "Create role" window, and select the policy you just created.

  - Click Next: Tags, and optionally enter any tags you wish to associate with this role.

  - Click Next: Review.

  - Enter a role name and optional description into the fields provided.

  - Click Create role. The new role name will appear at the top. Click on its name and copy the Role ARN from the newly created role.

- In GitLab, enter the copied role ARN into the Role ARN field.

- Click Authenticate with AWS.

- Choose your cluster's settings:

- Kubernetes cluster name - The name you wish to give the cluster.

- Environment scope - The environment associated with this cluster.

- Kubernetes version - The Kubernetes version to use. Currently the only version supported is 1.14.

- Role name - Select the IAM role to allow Amazon EKS and the Kubernetes control plane to manage AWS resources on your behalf. This IAM role is separate from the IAM role created above. You will need to create it if it does not yet exist.

- Region - The region in which the cluster will be created.

- Key pair name - Select the key pair that you can use to connect to your worker nodes if required.

- VPC - Select a VPC to use for your EKS Cluster resources.

- Subnets - Choose the subnets in your VPC where your worker nodes will run.

- Security group - Choose the security group to apply to the EKS-managed Elastic Network Interfaces that are created in your worker node subnets.

- Instance type - The instance type of your worker nodes.

- Node count - The number of worker nodes.

- GitLab-managed cluster - Leave this checked if you want GitLab to manage namespaces and service accounts for this cluster. See the Managed clusters section for more information.

- Finally, click the Create Kubernetes cluster button.

After about 10 minutes, your cluster will be ready to go. You can now proceed to install some pre-defined applications.

NOTE: Note: You will need to add your AWS external ID to the IAM Role in the AWS CLI to manage your cluster using kubectl.
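One way to do this is an AWS CLI named profile that assumes the role and passes the external ID. The sketch below prints such a profile using the AWS CLI config settings role_arn, external_id, and source_profile. The helper name render_aws_profile, the profile name, the role ARN, and the external ID are all placeholder assumptions; substitute the values from your own setup.

```shell
#!/bin/sh
# Hypothetical helper: print an AWS CLI config profile that assumes a role
# using an external ID.
#   $1 profile name   $2 role ARN   $3 external ID   $4 source profile
render_aws_profile() {
  profile_name="$1"
  role_arn="$2"
  external_id="$3"
  source_profile="$4"
  cat <<EOF
[profile ${profile_name}]
role_arn = ${role_arn}
external_id = ${external_id}
source_profile = ${source_profile}
EOF
}

# Example with placeholder values; append the output to ~/.aws/config.
render_aws_profile gitlab-eks \
  "arn:aws:iam::123456789012:role/gitlab-eks-example" \
  "example-external-id" \
  default
```

With the profile in place, commands that accept a profile (for example, generating a kubeconfig for the cluster with the AWS CLI) can reference it by name.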

Add existing cluster

If you have an existing Kubernetes cluster, you can add it to a project, group, or instance.

For more information, see the sections on adding an:

- Existing Kubernetes cluster.

- Existing EKS cluster.

NOTE: Note: Kubernetes integration is not supported for arm64 clusters. See the issue Helm Tiller fails to install on arm64 cluster for details.

Existing Kubernetes cluster

To add a Kubernetes cluster to your project, group, or instance:

- Navigate to your:

  - Project's Operations > Kubernetes page, for a project-level cluster.

  - Group's Kubernetes page, for a group-level cluster.

  - Admin Area > Kubernetes page, for an instance-level cluster.

- Click Add Kubernetes cluster.

- Click the Add existing cluster tab and fill in the details:

  - Kubernetes cluster name (required) - The name you wish to give the cluster.

  - Environment scope (required) - The environment associated with this cluster.

  - API URL (required) - The URL that GitLab uses to access the Kubernetes API. Kubernetes exposes several APIs; we want the "base" URL that is common to all of them, for example, https://kubernetes.example.com rather than https://kubernetes.example.com/api/v1.

    Get the API URL by running this command:

        kubectl cluster-info | grep 'Kubernetes master' | awk '/http/ {print $NF}'

  - CA certificate (required) - A valid Kubernetes certificate is needed to authenticate to the cluster. We will use the certificate created by default.

    - List the secrets with kubectl get secrets, and one should be named similar to default-token-xxxxx. Copy that token name for use below.

    - Get the certificate by running this command:

          kubectl get secret <secret name> -o jsonpath="{['data']['ca\.crt']}" | base64 --decode

      NOTE: Note: If the command returns the entire certificate chain, you need to copy the root CA certificate at the bottom of the chain.

  - Token - GitLab authenticates against Kubernetes using service tokens, which are scoped to a particular namespace. The token used should belong to a service account with cluster-admin privileges. To create this service account:

    - Create a file called gitlab-admin-service-account.yaml with contents:

          apiVersion: v1
          kind: ServiceAccount
          metadata:
            name: gitlab-admin
            namespace: kube-system
          ---
          apiVersion: rbac.authorization.k8s.io/v1beta1
          kind: ClusterRoleBinding
          metadata:
            name: gitlab-admin
          roleRef:
            apiGroup: rbac.authorization.k8s.io
            kind: ClusterRole
            name: cluster-admin
          subjects:
          - kind: ServiceAccount
            name: gitlab-admin
            namespace: kube-system

    - Apply the service account and cluster role binding to your cluster:

          kubectl apply -f gitlab-admin-service-account.yaml

      You will need the container.clusterRoleBindings.create permission to create cluster-level roles. If you do not have this permission, you can alternatively enable Basic Authentication and then run the kubectl apply command as an admin:

          kubectl apply -f gitlab-admin-service-account.yaml --username=admin --password=<password>

      NOTE: Note: Basic Authentication can be turned on and the password credentials can be obtained using the Google Cloud Console.

      Output:

          serviceaccount "gitlab-admin" created
          clusterrolebinding "gitlab-admin" created

    - Retrieve the token for the gitlab-admin service account:

          kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep gitlab-admin | awk '{print $1}')

      Copy the <authentication_token> value from the output:

          Name:         gitlab-admin-token-b5zv4
          Namespace:    kube-system
          Labels:       <none>
          Annotations:  kubernetes.io/service-account.name=gitlab-admin
                        kubernetes.io/service-account.uid=bcfe66ac-39be-11e8-97e8-026dce96b6e8

          Type:  kubernetes.io/service-account-token

          Data
          ====
          ca.crt:     1025 bytes
          namespace:  11 bytes
          token:      <authentication_token>

    NOTE: Note: For GKE clusters, you will need the container.clusterRoleBindings.create permission to create a cluster role binding. You can follow the Google Cloud documentation to grant access.

  - GitLab-managed cluster - Leave this checked if you want GitLab to manage namespaces and service accounts for this cluster. See the Managed clusters section for more information.

  - Project namespace (optional) - You don't have to fill it in; by leaving it blank, GitLab will create one for you. Also:

    - Each project should have a unique namespace.

    - The project namespace is not necessarily the namespace of the secret, if you're using a secret with broader permissions, like the secret from default.

    - You should not use default as the project namespace.

    - If you or someone created a secret specifically for the project, usually with limited permissions, the secret's namespace and project namespace may be the same.

- Finally, click the Create Kubernetes cluster button.

After a couple of minutes, your cluster will be ready to go. You can now proceed to install some pre-defined applications.
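The API URL field expects the base URL rather than a versioned API path. If the URL you have on hand includes a path, it can be trimmed before pasting it into GitLab. The following is a minimal sketch; base_api_url is a hypothetical helper name, and it assumes the URL starts with http:// or https://.

```shell
#!/bin/sh
# Hypothetical helper: reduce a Kubernetes API URL to scheme://host[:port],
# dropping any path such as /api/v1.
base_api_url() {
  printf '%s\n' "$1" | sed -E 's#^(https?://[^/]+).*$#\1#'
}

# Example using the URL from the API URL description above.
base_api_url "https://kubernetes.example.com/api/v1"
```

A URL that is already a base URL passes through unchanged.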

Existing EKS cluster

To add an existing EKS cluster to your project, group, or instance:

- Perform the following steps on the EKS cluster:

  - Retrieve the certificate. A valid Kubernetes certificate is needed to authenticate to the EKS cluster. We will use the certificate created by default. Open a shell and use kubectl to retrieve it:

    - List the secrets with kubectl get secrets, and one should be named similar to default-token-xxxxx. Copy that token name for use below.

    - Get the certificate with:

          kubectl get secret <secret name> -o jsonpath="{['data']['ca\.crt']}" | base64 --decode

  - Create admin token. A cluster-admin token is required to install and manage Helm Tiller. GitLab establishes mutual SSL authentication with Helm Tiller and creates limited service accounts for each application. To create the token we will create an admin service account as follows:

    - Create a file called eks-admin-service-account.yaml with contents:

          apiVersion: v1
          kind: ServiceAccount
          metadata:
            name: eks-admin
            namespace: kube-system

    - Apply the service account to your cluster:

          $ kubectl apply -f eks-admin-service-account.yaml
          serviceaccount "eks-admin" created

    - Create a file called eks-admin-cluster-role-binding.yaml with contents:

          apiVersion: rbac.authorization.k8s.io/v1beta1
          kind: ClusterRoleBinding
          metadata:
            name: eks-admin
          roleRef:
            apiGroup: rbac.authorization.k8s.io
            kind: ClusterRole
            name: cluster-admin
          subjects:
          - kind: ServiceAccount
            name: eks-admin
            namespace: kube-system

    - Apply the cluster role binding to your cluster:

          $ kubectl apply -f eks-admin-cluster-role-binding.yaml
          clusterrolebinding "eks-admin" created

    - Retrieve the token for the eks-admin service account:

          kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep eks-admin | awk '{print $1}')

      Copy the <authentication_token> value from the output:

          Name:         eks-admin-token-b5zv4
          Namespace:    kube-system
          Labels:       <none>
          Annotations:  kubernetes.io/service-account.name=eks-admin
                        kubernetes.io/service-account.uid=bcfe66ac-39be-11e8-97e8-026dce96b6e8

          Type:  kubernetes.io/service-account-token

          Data
          ====
          ca.crt:     1025 bytes
          namespace:  11 bytes
          token:      <authentication_token>

  - Locate the API server endpoint so GitLab can connect to the cluster. This is displayed on the AWS EKS console, when viewing the EKS cluster details.

- Navigate to your:

  - Project's Operations > Kubernetes page, for a project-level cluster.

  - Group's Kubernetes page, for a group-level cluster.

  - Admin Area > Kubernetes page, for an instance-level cluster.

- Click Add Kubernetes cluster.

- Click the Add existing cluster tab and fill in the details:

  - Kubernetes cluster name: A name for the cluster to identify it within GitLab.

  - Environment scope: Leave this as * for now, since we are only connecting a single cluster.

  - API URL: The API server endpoint retrieved earlier.

  - CA Certificate: The certificate data from the earlier step, as-is.

  - Service Token: The admin token value.

  - For project-level clusters, Project namespace prefix: This can be left blank to accept the default namespace, based on the project name.

- Click on Add Kubernetes cluster. The cluster is now connected to GitLab.
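For the Service Token field, the token can be filtered out of the describe output instead of being copied by hand. The sketch below assumes the output layout shown in the token step above, where the value follows a token: label. extract_token is a hypothetical helper name, and the sample input is made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: read `kubectl describe secret` output on stdin and
# print the value that follows the "token:" label.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}

# Example with sample text standing in for real kubectl output.
extract_token <<'EOF'
Data
====
ca.crt:     1025 bytes
namespace:  11 bytes
token:      example-token-value
EOF
```

Against a real cluster, the describe command from the token step would be piped into extract_token in place of the sample text.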

At this point, Kubernetes deployment variables will automatically be available during CI/CD jobs, making it easy to interact with the cluster.

If you would like to utilize your own CI/CD scripts to deploy to the cluster, you can stop here.
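If you write your own deploy script, it can help to fail early when the deployment variables are not present in the job. The sketch below is illustrative only: the variable names KUBE_URL, KUBE_TOKEN, KUBE_NAMESPACE, and KUBECONFIG are assumptions not listed on this page, so check the deployment variables documentation for your GitLab version. missing_kube_vars is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print the names of expected deployment variables
# that are unset or empty, separated by spaces. The variable list is an
# assumption; adjust it to match your GitLab version.
missing_kube_vars() {
  missing=""
  for name in KUBE_URL KUBE_TOKEN KUBE_NAMESPACE KUBECONFIG; do
    eval "value=\${$name:-}"
    [ -n "$value" ] || missing="$missing $name"
  done
  printf '%s\n' "${missing# }"
}

# Example: report the result without aborting, so the snippet is safe to
# run outside a CI job.
result="$(missing_kube_vars)"
if [ -n "$result" ]; then
  echo "Missing deployment variables: $result"
else
  echo "All expected deployment variables are set."
fi
```

In a real job, the script would typically exit with a non-zero status when the list is not empty, before running any kubectl commands.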

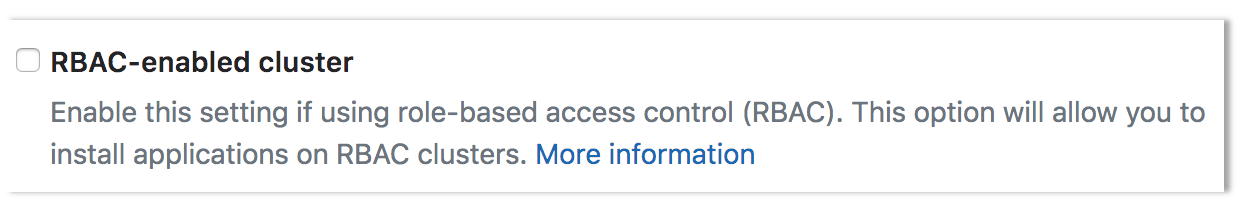

Disable Role-Based Access Control (RBAC) (optional)

When connecting a cluster via GitLab integration, you may specify whether the cluster is RBAC-enabled or not. This will affect how GitLab interacts with the cluster for certain operations. If you did not check the "RBAC-enabled cluster" checkbox at creation time, GitLab will assume RBAC is disabled for your cluster when interacting with it. If so, you must disable RBAC on your cluster for the integration to work properly.

NOTE: Note: Disabling RBAC means that any application running in the cluster, or user who can authenticate to the cluster, has full API access. This is a security concern, and may not be desirable.

To effectively disable RBAC, global permissions can be applied granting full access:

    kubectl create clusterrolebinding permissive-binding \
      --clusterrole=cluster-admin \
      --user=admin \
      --user=kubelet \
      --group=system:serviceaccounts

Create a default Storage Class

Amazon EKS doesn't have a default Storage Class out of the box, which means requests for persistent volumes will not be automatically fulfilled. As part of Auto DevOps, the deployed Postgres instance requests persistent storage, and without a default storage class it will fail to start.

If a default Storage Class doesn't already exist and is desired, follow Amazon's guide on storage classes to create one.

Alternatively, disable Postgres by setting the project variable POSTGRES_ENABLED to false.

Deploy the app to EKS

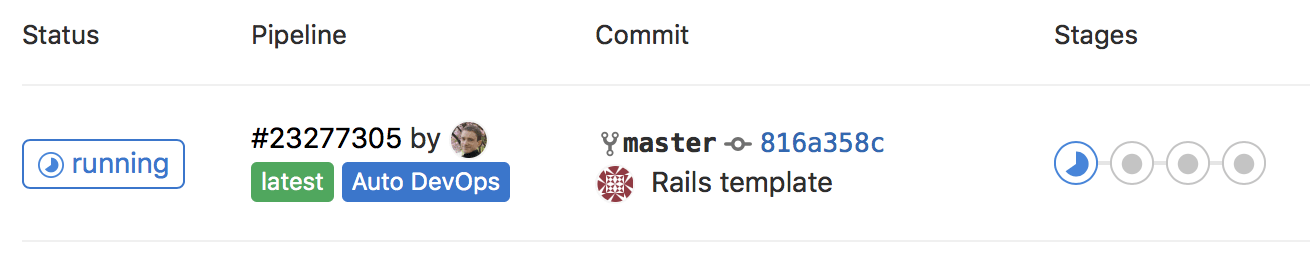

With RBAC disabled and services deployed, Auto DevOps can now be leveraged to build, test, and deploy the app.

Enable Auto DevOps if not already enabled. If a wildcard DNS entry was created resolving to the Load Balancer, enter it in the domain field under the Auto DevOps settings. Otherwise, the deployed app will not be externally available outside of the cluster.

A new pipeline will automatically be created, which will begin to build, test, and deploy the app.

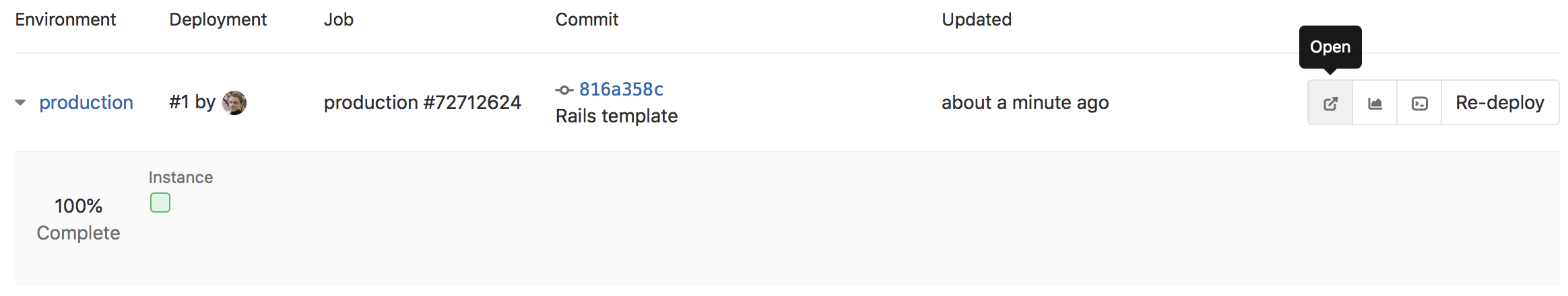

After the pipeline has finished, your app will be running in EKS and available to users. Click on CI/CD > Environments.

You will see a list of the environments and their deploy status, as well as options to browse to the app, view monitoring metrics, and even access a shell on the running pod.

Enabling or disabling integration

After you have successfully added your cluster information, you can enable the Kubernetes cluster integration:

- Click the Enabled/Disabled switch.

- Click Save for the changes to take effect.

To disable the Kubernetes cluster integration, follow the same procedure.

Removing integration

To remove the Kubernetes cluster integration from your project, either:

- Select Remove integration, to remove only the Kubernetes integration.

- From GitLab 12.6, select Remove integration and resources, to also remove all related GitLab cluster resources (for example, namespaces, roles, and bindings) when removing the integration.

When removing the cluster integration, note:

- You need Maintainer permissions or above to remove a Kubernetes cluster integration.

- When you remove a cluster, you only remove its relationship to GitLab, not the cluster itself. To remove the cluster itself, visit the GKE or EKS dashboard, or use kubectl.

Learn more

To learn more on automatically deploying your applications, read about Auto DevOps.