38 KiB

| stage | group | info |

|---|---|---|

| Systems | Gitaly | To determine the technical writer assigned to the Stage/Group associated with this page, see https://about.gitlab.com/handbook/engineering/ux/technical-writing/#assignments |

Gitaly and Gitaly Cluster (FREE SELF)

Gitaly provides high-level RPC access to Git repositories. It is used by GitLab to read and write Git data.

Gitaly is present in every GitLab installation and coordinates Git repository storage and retrieval. Gitaly can be:

- A background service operating on a single instance Omnibus GitLab (all of GitLab on one machine).

- Separated onto its own instance and configured in a full cluster configuration, depending on scaling and availability requirements.

Gitaly implements a client-server architecture:

- A Gitaly server is any node that runs Gitaly itself.

- A Gitaly client is any node that runs a process that makes requests of the Gitaly server. Gitaly clients are also known as Gitaly consumers and include:

Gitaly manages only Git repository access for GitLab. Other types of GitLab data aren't accessed using Gitaly.

GitLab accesses repositories through the configured repository storages. Each new repository is stored on one of the repository storages based on their configured weights. Each repository storage is either:

- A Gitaly storage with direct access to repositories using storage paths, where each repository is stored on a single Gitaly node. All requests are routed to this node.

- A virtual storage provided by Gitaly Cluster, where each

repository can be stored on multiple Gitaly nodes for fault tolerance. In a Gitaly Cluster:

- Read requests are distributed between multiple Gitaly nodes, which can improve performance.

- Write requests are broadcast to repository replicas.

Before deploying Gitaly Cluster

Gitaly Cluster provides the benefits of fault tolerance, but comes with additional complexity of setup and management. Before deploying Gitaly Cluster, please review:

- Existing known issues.

- Snapshot limitations.

- Configuration guidance and Repository storage options to make sure that Gitaly Cluster is the best setup for you.

If you have:

- Not yet migrated to Gitaly Cluster and want to continue using NFS, remain on the service you are using. NFS is supported in 14.x releases but is deprecated. Support for storing Git repository data on NFS is scheduled to end for all versions of GitLab on November 22, 2022.

- Not yet migrated to Gitaly Cluster but want to migrate away from NFS, you have two options:

- A sharded Gitaly instance.

- Gitaly Cluster.

Contact your Technical Account Manager or customer support if you have any questions.

Known issues

The following table outlines current known issues impacting the use of Gitaly Cluster. For the current status of these issues, please refer to the referenced issues and epics.

| Issue | Summary | How to avoid |

|---|---|---|

| Gitaly Cluster + Geo - Issues retrying failed syncs | If Gitaly Cluster is used on a Geo secondary site, repositories that have failed to sync could continue to fail when Geo tries to resync them. Recovering from this state requires assistance from support to run manual steps. Work is in-progress to update Gitaly Cluster to identify repositories with a unique and persistent identifier, which is expected to resolve the issue. | No known solution at this time. |

| Praefect unable to insert data into the database due to migrations not being applied after an upgrade | If the database is not kept up to date with completed migrations, then the Praefect node is unable to perform normal operation. | Make sure the Praefect database is up and running with all migrations completed (For example: /opt/gitlab/embedded/bin/praefect -config /var/opt/gitlab/praefect/config.toml sql-migrate-status should show a list of all applied migrations). Consider requesting upgrade assistance so your upgrade plan can be reviewed by support. |

| Restoring a Gitaly Cluster node from a snapshot in a running cluster | Because the Gitaly Cluster runs with consistent state, introducing a single node that is behind will result in the cluster not being able to reconcile the nodes data and other nodes data | Don't restore a single Gitaly Cluster node from a backup snapshot. If you must restore from backup, it's best to snapshot all Gitaly Cluster nodes at the same time and take a database dump of the Praefect database. |

Snapshot backup and recovery limitations

Gitaly Cluster does not support snapshot backups. Snapshot backups can cause issues where the Praefect database becomes out of sync with the disk storage. Because of how Praefect rebuilds the replication metadata of Gitaly disk information during a restore, we recommend using the official backup and restore Rake tasks.

If you are unable to use this method, please contact customer support for restoration help.

We are tracking in this issue improvements to the official backup and restore Rake tasks to add support for incremental backups. For more information, see this epic.

What to do if you are on Gitaly Cluster experiencing an issue or limitation

Please contact customer support for immediate help in restoration or recovery.

Gitaly

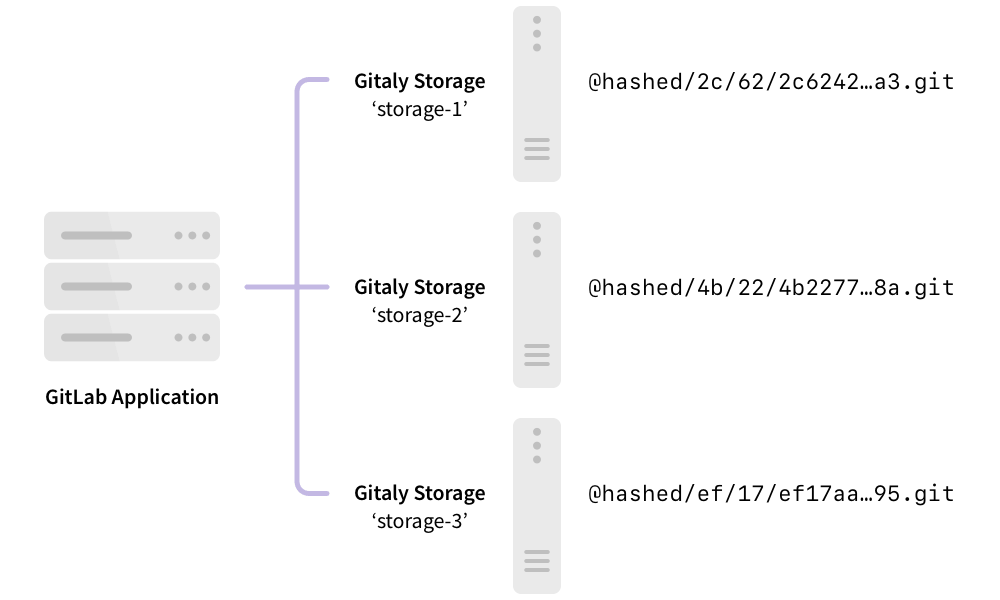

The following shows GitLab set up to use direct access to Gitaly:

In this example:

- Each repository is stored on one of three Gitaly storages:

storage-1,storage-2, orstorage-3. - Each storage is serviced by a Gitaly node.

- The three Gitaly nodes store data on their file systems.

Gitaly architecture

The following illustrates the Gitaly client-server architecture:

flowchart TD

subgraph Gitaly clients

A[GitLab Rails]

B[GitLab Workhorse]

C[GitLab Shell]

D[...]

end

subgraph Gitaly

E[Git integration]

end

F[Local filesystem]

A -- gRPC --> Gitaly

B -- gRPC--> Gitaly

C -- gRPC --> Gitaly

D -- gRPC --> Gitaly

E --> F

Configure Gitaly

Gitaly comes pre-configured with Omnibus GitLab, which is a configuration suitable for up to 1000 users. For:

- Omnibus GitLab installations for up to 2000 users, see specific Gitaly configuration instructions.

- Source installations or custom Gitaly installations, see Configure Gitaly.

GitLab installations for more than 2000 active users performing daily Git write operation may be best suited by using Gitaly Cluster.

Gitaly Cluster

Git storage is provided through the Gitaly service in GitLab, and is essential to the operation of GitLab. When the number of users, repositories, and activity grows, it is important to scale Gitaly appropriately by:

- Increasing the available CPU and memory resources available to Git before resource exhaustion degrades Git, Gitaly, and GitLab application performance.

- Increasing available storage before storage limits are reached causing write operations to fail.

- Removing single points of failure to improve fault tolerance. Git should be considered mission critical if a service degradation would prevent you from deploying changes to production.

Gitaly can be run in a clustered configuration to:

- Scale the Gitaly service.

- Increase fault tolerance.

In this configuration, every Git repository can be stored on multiple Gitaly nodes in the cluster.

Using a Gitaly Cluster increases fault tolerance by:

- Replicating write operations to warm standby Gitaly nodes.

- Detecting Gitaly node failures.

- Automatically routing Git requests to an available Gitaly node.

NOTE: Technical support for Gitaly clusters is limited to GitLab Premium and Ultimate customers.

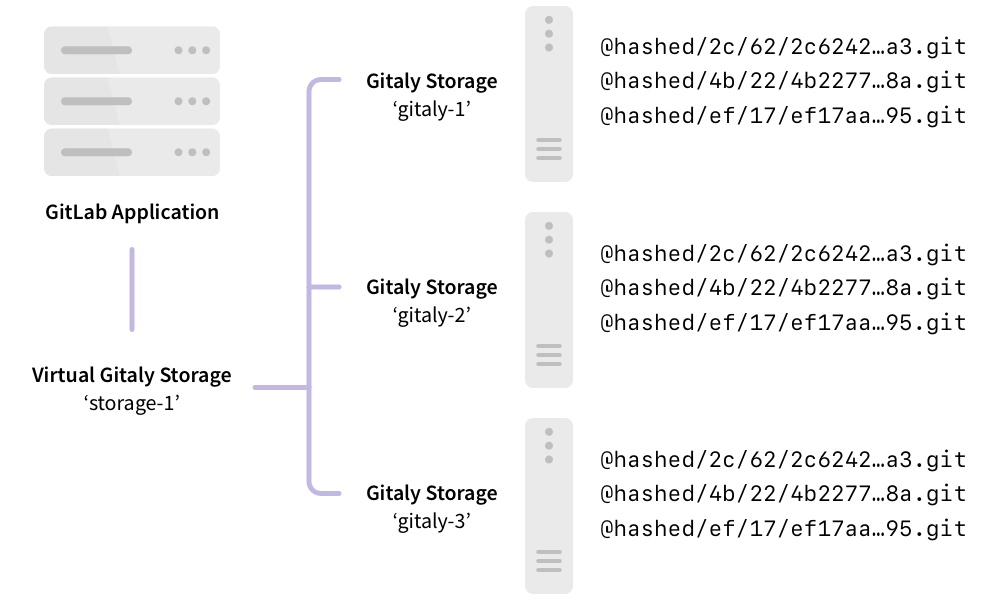

The following shows GitLab set up to access storage-1, a virtual storage provided by Gitaly

Cluster:

In this example:

- Repositories are stored on a virtual storage called

storage-1. - Three Gitaly nodes provide

storage-1access:gitaly-1,gitaly-2, andgitaly-3. - The three Gitaly nodes share data in three separate hashed storage locations.

- The replication factor is

3. Three copies are maintained of each repository.

The availability objectives for Gitaly clusters assuming a single node failure are:

-

Recovery Point Objective (RPO): Less than 1 minute.

Writes are replicated asynchronously. Any writes that have not been replicated to the newly promoted primary are lost.

Strong consistency prevents loss in some circumstances.

-

Recovery Time Objective (RTO): Less than 10 seconds. Outages are detected by a health check run by each Praefect node every second. Failover requires ten consecutive failed health checks on each Praefect node.

Faster outage detection, to improve this speed to less than 1 second, is tracked in this issue.

WARNING: If complete cluster failure occurs, disaster recovery plans should be executed. These can affect the RPO and RTO discussed above.

Comparison to Geo

Gitaly Cluster and Geo both provide redundancy. However the redundancy of:

- Gitaly Cluster provides fault tolerance for data storage and is invisible to the user. Users are not aware when Gitaly Cluster is used.

- Geo provides replication and disaster recovery for an entire instance of GitLab. Users know when they are using Geo for replication. Geo replicates multiple data types, including Git data.

The following table outlines the major differences between Gitaly Cluster and Geo:

| Tool | Nodes | Locations | Latency tolerance | Failover | Consistency | Provides redundancy for |

|---|---|---|---|---|---|---|

| Gitaly Cluster | Multiple | Single | Less than 1 second, ideally single-digit milliseconds | Automatic | Strong | Data storage in Git |

| Geo | Multiple | Multiple | Up to one minute | Manual | Eventual | Entire GitLab instance |

For more information, see:

- Geo use cases.

- Geo architecture.

Virtual storage

Virtual storage makes it viable to have a single repository storage in GitLab to simplify repository management.

Virtual storage with Gitaly Cluster can usually replace direct Gitaly storage configurations. However, this is at the expense of additional storage space needed to store each repository on multiple Gitaly nodes. The benefit of using Gitaly Cluster virtual storage over direct Gitaly storage is:

- Improved fault tolerance, because each Gitaly node has a copy of every repository.

- Improved resource utilization, reducing the need for over-provisioning for shard-specific peak loads, because read loads are distributed across Gitaly nodes.

- Manual rebalancing for performance is not required, because read loads are distributed across Gitaly nodes.

- Simpler management, because all Gitaly nodes are identical.

The number of repository replicas can be configured using a replication factor.

It can be uneconomical to have the same replication factor for all repositories. To provide greater flexibility for extremely large GitLab instances, variable replication factor is tracked in this issue.

As with normal Gitaly storages, virtual storages can be sharded.

Storage layout

WARNING: The storage layout is an internal detail of Gitaly Cluster and is not guaranteed to remain stable between releases. The information here is only for informational purposes and to help with debugging. Performing changes in the repositories directly on the disk is not supported and may lead to breakage or the changes being overwritten.

Gitaly Cluster's virtual storages provide an abstraction that looks like a single storage but actually consists of multiple physical storages. Gitaly Cluster has to replicate each operation to each physical storage. Operations may succeed on some of the physical storages but fail on others.

Partially applied operations can cause problems with other operations and leave the system in a state it can't recover from. To avoid these types of problems, each operation should either fully apply or not apply at all. This property of operations is called atomicity.

GitLab controls the storage layout on the repository storages. GitLab instructs the repository storage where to create, delete, and move repositories. These operations create atomicity issues when they are being applied to multiple physical storages. For example:

- GitLab deletes a repository while one of its replicas is unavailable.

- GitLab later recreates the repository.

As a result, the stale replica that was unavailable at the time of deletion may cause conflicts and prevent recreation of the repository.

These atomicity issues have caused multiple problems in the past with:

- Geo syncing to a secondary site with Gitaly Cluster.

- Backup restoration.

- Repository moves between repository storages.

Gitaly Cluster provides atomicity for these operations by storing repositories on the disk in a special layout that prevents conflicts that could occur due to partially applied operations.

Client-generated replica paths

Repositories are stored in the storages at the relative path determined by the Gitaly client. These paths can be

identified by them not beginning with the @cluster prefix. The relative paths

follow the hashed storage schema.

Praefect-generated replica paths (GitLab 15.0 and later)

Introduced in GitLab 15.0 behind a feature flag named

gitaly_praefect_generated_replica_paths. Disabled by default.

FLAG:

On self-managed GitLab, by default this feature is not available. To make it available, ask an administrator to enable the feature flag

named gitaly_praefect_generated_replica_paths. On GitLab.com, this feature is available but can be configured by GitLab.com administrators only. The feature is not ready for production use.

When Gitaly Cluster creates a repository, it assigns the repository a unique and permanent ID called the repository ID. The repository ID is internal to Gitaly Cluster and doesn't relate to any IDs elsewhere in GitLab. If a repository is removed from Gitaly Cluster and later moved back, the repository is assigned a new repository ID and is a different repository from Gitaly Cluster's perspective. The sequence of repository IDs always increases, but there may be gaps in the sequence.

The repository ID is used to derive a unique storage path called replica path for each repository on the cluster. The replicas of a repository are all stored at the same replica path on the storages. The replica path is distinct from the relative path:

- The relative path is a name the Gitaly client uses to identify a repository, together with its virtual storage, that is unique to them.

- The replica path is the actual physical path in the physical storages.

Praefect translates the repositories in the RPCs from the virtual (virtual storage, relative path) identifier into physical repository

(storage, replica_path) identifier when handling the client requests.

The format of the replica path for:

- Object pools is

@cluster/pools/<xx>/<xx>/<repository ID>. Object pools are stored in a different directory than other repositories. They must be identifiable by Gitaly to avoid pruning them as part of housekeeping. Pruning object pools can cause data loss in the linked repositories. - Other repositories is

@cluster/repositories/<xx>/<xx>/<repository ID>

For example, @cluster/repositories/6f/96/54771.

The last component of the replica path, 54771, is the repository ID. This can be used to identify the repository on the disk.

<xx>/<xx> are the first four hex digits of the SHA256 hash of the string representation of the repository ID. This is used to balance

the repositories evenly into subdirectories to avoid overly large directories that might cause problems on some file

systems. In this case, 54771 hashes to 6f960ab01689464e768366d3315b3d3b2c28f38761a58a70110554eb04d582f7 so the

first four digits are 6f and 96.

Identify repositories on disk

Use the praefect metadata subcommand to:

- Retrieve a repository's virtual storage and relative path from the metadata store. After you have the hashed storage path, you can use the Rails console to retrieve the project path.

- Find where a repository is stored in the cluster with either:

- The virtual storage and relative path.

- The repository ID.

The repository on disk also contains the project path in the Git configuration file. The configuration file can be used to determine the project path even if the repository's metadata has been deleted. Follow the instructions in hashed storage's documentation.

Atomicity of operations

Gitaly Cluster uses the PostgreSQL metadata store with the storage layout to ensure atomicity of repository creation, deletion, and move operations. The disk operations can't be atomically applied across multiple storages. However, PostgreSQL guarantees the atomicity of the metadata operations. Gitaly Cluster models the operations in a manner that the failing operations always leave the metadata consistent. The disks may contain stale state even after successful operations. This is expected and the leftover state won't interfere with future operations but may use up disk space unnecessarily until a clean up is performed.

There is on-going work on a background crawler that cleans up the leftover repositories from the storages.

Repository creations

When creating repositories, Praefect:

- Reserves a repository ID from PostgreSQL. This is atomic and no two creations receive the same ID.

- Creates replicas on the Gitaly storages in the replica path derived from the repository ID.

- Creates metadata records after the repository is successfully created on disk.

Even if two concurrent operations create the same repository, they'd be stored in different directories on the storages and not conflict. The first to complete creates the metadata record and the other operation fails with an "already exists" error. The failing creation leaves leftover repositories on the storages. There is on-going work on a background crawler that clean up the leftover repositories from the storages.

The repository IDs are generated from the repositories_repository_id_seq in PostgreSQL. In the above example, the failing operation took

one repository ID without successfully creating a repository with it. Failed repository creations are expected lead to gaps in the repository IDs.

Repository deletions

A repository is deleted by removing its metadata record. The repository ceases to logically exist as soon as the metadata record is deleted. PostgreSQL guarantees the atomicity of the removal and a concurrent delete fails with a "not found" error. After successfully deleting the metadata record, Praefect attempts to remove the replicas from the storages. This may fail and leave leftover state in the storages. The leftover state is eventually cleaned up.

Repository moves

Unlike Gitaly, Gitaly Cluster doesn't move the repositories in the storages but only virtually moves the repository by updating the relative path of the repository in the metadata store.

Moving beyond NFS

Engineering support for NFS for Git repositories is deprecated. Technical support is planned to be unavailable starting November 22, 2022. Please see our statement of support for more details.

Network File System (NFS) is not well suited to Git workloads which are CPU and IOPS sensitive. Specifically:

- Git is sensitive to file system latency. Some operations require many read operations. Operations that are fast on block storage can become an order of magnitude slower. This significantly impacts GitLab application performance.

- NFS performance optimizations that prevent the performance gap between block storage and NFS being even wider are vulnerable to race conditions. We have observed data inconsistencies in production environments caused by simultaneous writes to different NFS clients. Data corruption is not an acceptable risk.

Gitaly Cluster is purpose built to provide reliable, high performance, fault tolerant Git storage.

Further reading:

- Blog post: The road to Gitaly v1.0 (aka, why GitLab doesn't require NFS for storing Git data anymore)

- Blog post: How we spent two weeks hunting an NFS bug in the Linux kernel

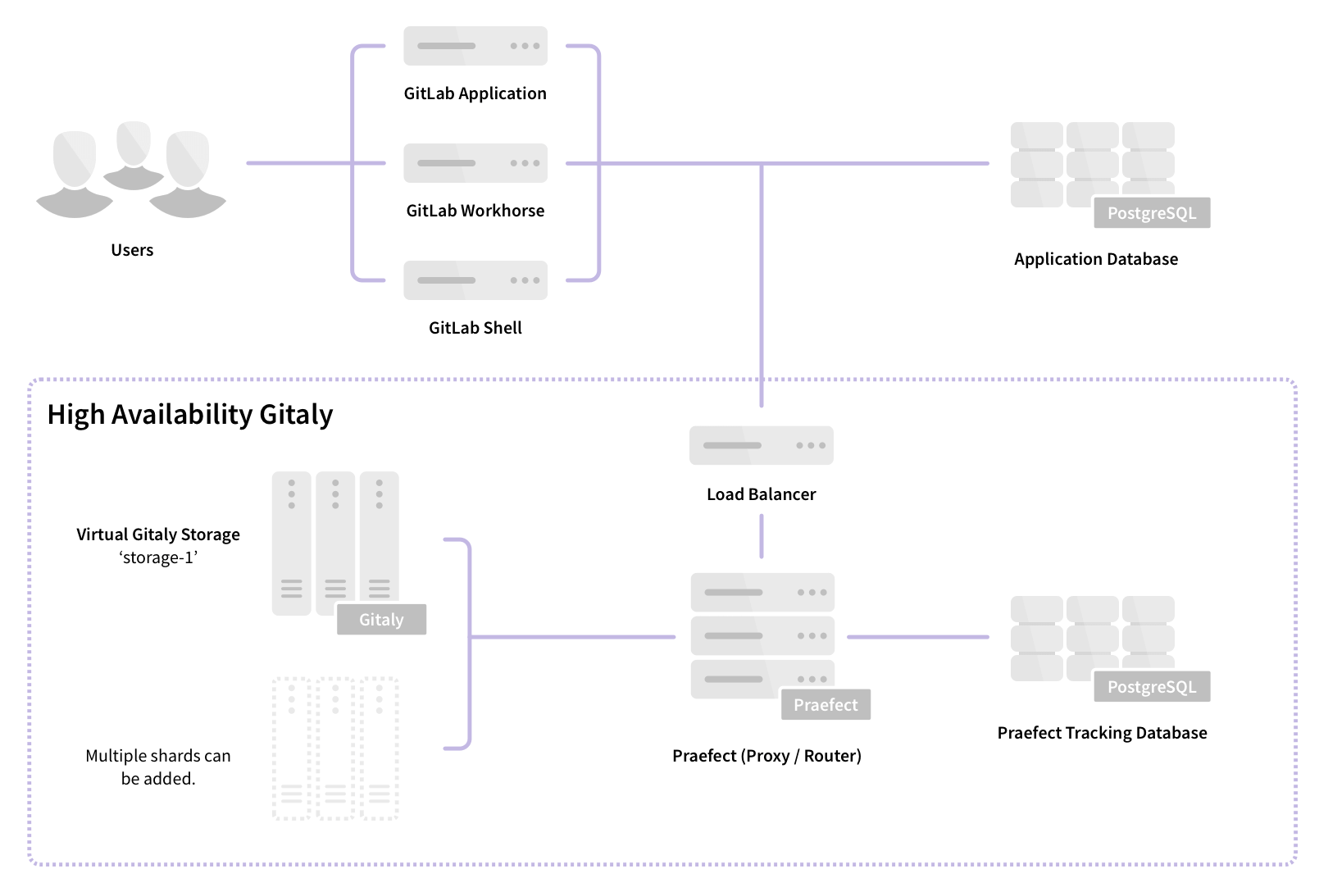

Components

Gitaly Cluster consists of multiple components:

- Load balancer for distributing requests and providing fault-tolerant access to Praefect nodes.

- Praefect nodes for managing the cluster and routing requests to Gitaly nodes.

- PostgreSQL database for persisting cluster metadata and PgBouncer, recommended for pooling Praefect's database connections.

- Gitaly nodes to provide repository storage and Git access.

Architecture

Praefect is a router and transaction manager for Gitaly, and a required component for running a Gitaly Cluster.

For more information, see Gitaly High Availability (HA) Design.

Features

Gitaly Cluster provides the following features:

- Distributed reads among Gitaly nodes.

- Strong consistency of the secondary replicas.

- Replication factor of repositories for increased redundancy.

- Automatic failover from the primary Gitaly node to secondary Gitaly nodes.

- Reporting of possible data loss if replication queue isn't empty.

Follow the Gitaly Cluster epic for improvements including horizontally distributing reads.

Distributed reads

- Introduced in GitLab 13.1 in beta with feature flag

gitaly_distributed_readsset to disabled.- Made generally available and enabled by default in GitLab 13.3.

- Disabled by default in GitLab 13.5.

- Enabled by default in GitLab 13.8.

- Feature flag removed in GitLab 13.11.

Gitaly Cluster supports distribution of read operations across Gitaly nodes that are configured for the virtual storage.

All RPCs marked with the ACCESSOR option are redirected to an up to date and healthy Gitaly node.

For example, GetBlob.

Up to date in this context means that:

- There is no replication operations scheduled for this Gitaly node.

- The last replication operation is in completed state.

The primary node is chosen to serve the request if:

- There are no up to date nodes.

- Any other error occurs during node selection.

You can monitor distribution of reads using Prometheus.

Strong consistency

- Introduced in GitLab 13.1 in alpha, disabled by default.

- Entered beta in GitLab 13.2, disabled by default.

- In GitLab 13.3, disabled unless primary-wins voting strategy is disabled.

- From GitLab 13.4, enabled by default.

- From GitLab 13.5, you must use Git v2.28.0 or higher on Gitaly nodes to enable strong consistency.

- From GitLab 13.6, primary-wins voting strategy and the

gitaly_reference_transactions_primary_winsfeature flag was removed.- From GitLab 14.0, Gitaly Cluster only supports strong consistency, and the

gitaly_reference_transactionsfeature flag was removed.

Gitaly Cluster provides strong consistency by writing changes synchronously to all healthy, up-to-date replicas. If a replica is outdated or unhealthy at the time of the transaction, the write is asynchronously replicated to it.

If strong consistency is unavailable, Gitaly Cluster guarantees eventual consistency. In this case. Gitaly Cluster replicates all writes to secondary Gitaly nodes after the write to the primary Gitaly node has occurred.

Strong consistency:

- Is the primary replication method in GitLab 14.0 and later. A subset of operations still use replication jobs (eventual consistency) instead of strong consistency. Refer to the strong consistency epic for more information.

- Must be configured in GitLab versions 13.1 to 13.12. For configuration information, refer to either:

- Documentation on your GitLab instance at

/help. - The 13.12 documentation.

- Documentation on your GitLab instance at

- Is unavailable in GitLab 13.0 and earlier.

For more information on monitoring strong consistency, see the Gitaly Cluster Prometheus metrics documentation.

Replication factor

Replication factor is the number of copies Gitaly Cluster maintains of a given repository. A higher replication factor:

- Offers better redundancy and distribution of read workload.

- Results in higher storage cost.

By default, Gitaly Cluster replicates repositories to every storage in a virtual storage.

For configuration information, see Configure replication factor.

Configure Gitaly Cluster

For more information on configuring Gitaly Cluster, see Configure Gitaly Cluster.

Upgrade Gitaly Cluster

To upgrade a Gitaly Cluster, follow the documentation for zero downtime upgrades.

Downgrade Gitaly Cluster to a previous version

If you need to roll back a Gitaly Cluster to an earlier version, some Praefect database migrations may need to be reverted. In a cluster with:

- A single Praefect node, this happens when GitLab itself is downgraded.

- Multiple Praefect nodes, additional steps are required.

To downgrade a Gitaly Cluster with multiple Praefect nodes:

-

Stop the Praefect service on all Praefect nodes:

gitlab-ctl stop praefect -

Downgrade the GitLab package to the older version on one of the Praefect nodes.

-

On the downgraded node, check the state of Praefect migrations:

/opt/gitlab/embedded/bin/praefect -config /var/opt/gitlab/praefect/config.toml sql-migrate-status -

Count the number of migrations with

unknown migrationin theAPPLIEDcolumn. -

On a Praefect node that has not been downgraded, perform a dry run of the rollback to validate which migrations to revert.

<CT_UNKNOWN>is the number of unknown migrations reported by the downgraded node./opt/gitlab/embedded/bin/praefect -config /var/opt/gitlab/praefect/config.toml sql-migrate <CT_UNKNOWN> -

If the results look correct, run the same command with the

-foption to revert the migrations:/opt/gitlab/embedded/bin/praefect -config /var/opt/gitlab/praefect/config.toml sql-migrate -f <CT_UNKNOWN> -

Downgrade the GitLab package on the remaining Praefect nodes and start the Praefect service again:

gitlab-ctl start praefect

Migrate to Gitaly Cluster

WARNING: Some known issues exist in Gitaly Cluster. Review the following information before you continue.

Before migrating to Gitaly Cluster:

- Review Before deploying Gitaly Cluster.

- Upgrade to the latest possible version of GitLab, to take advantage of improvements and bug fixes.

To migrate to Gitaly Cluster:

- Create the required storage. Refer to repository storage recommendations.

- Create and configure Gitaly Cluster.

- Move the repositories. To migrate to Gitaly Cluster, existing repositories stored outside Gitaly Cluster must be moved. There is no automatic migration but the moves can be scheduled with the GitLab API.

Even if you don't use the default repository storage, you must ensure it is configured.

Read more about this limitation.

Migrate off Gitaly Cluster

If the limitations and tradeoffs of Gitaly Cluster are found to be not suitable for your environment, you can Migrate off Gitaly Cluster to a sharded Gitaly instance:

- Create and configure a new Gitaly server.

- Move the repositories to the newly created storage. You can move them by shard or by group, which gives you the opportunity to spread them over multiple Gitaly servers.

Do not bypass Gitaly

GitLab doesn't advise directly accessing Gitaly repositories stored on disk with a Git client, because Gitaly is being continuously improved and changed. These improvements may invalidate your assumptions, resulting in performance degradation, instability, and even data loss. For example:

- Gitaly has optimizations such as the

info/refsadvertisement cache, that rely on Gitaly controlling and monitoring access to repositories by using the official gRPC interface. - Gitaly Cluster has optimizations, such as fault tolerance and distributed reads, that depend on the gRPC interface and database to determine repository state.

WARNING: Accessing Git repositories directly is done at your own risk and is not supported.

Direct access to Git in GitLab

Direct access to Git uses code in GitLab known as the "Rugged patches".

Before Gitaly existed, what are now Gitaly clients accessed Git repositories directly, either:

- On a local disk in the case of a single-machine Omnibus GitLab installation.

- Using NFS in the case of a horizontally-scaled GitLab installation.

In addition to running plain git commands, GitLab used a Ruby library called

Rugged. Rugged is a wrapper around

libgit2, a stand-alone implementation of Git in the form of a C library.

Over time it became clear that Rugged, particularly in combination with

Unicorn, is extremely efficient. Because libgit2 is a library and

not an external process, there was very little overhead between:

- GitLab application code that tried to look up data in Git repositories.

- The Git implementation itself.

Because the combination of Rugged and Unicorn was so efficient, the GitLab application code ended up with lots of duplicate Git object lookups. For example, looking up the default branch commit a dozen times in one request. We could write inefficient code without poor performance.

When we migrated these Git lookups to Gitaly calls, we suddenly had a much higher fixed cost per Git

lookup. Even when Gitaly is able to re-use an already-running git process (for example, to look up

a commit), you still have:

- The cost of a network roundtrip to Gitaly.

- Inside Gitaly, a write/read roundtrip on the Unix pipes that connect Gitaly to the

gitprocess.

Using GitLab.com to measure, we reduced the number of Gitaly calls per request until the loss of Rugged's efficiency was no longer felt. It also helped that we run Gitaly itself directly on the Git file servers, rather than by using NFS mounts. This gave us a speed boost that counteracted the negative effect of not using Rugged anymore.

Unfortunately, other deployments of GitLab could not remove NFS like we did on GitLab.com, and they got the worst of both worlds:

- The slowness of NFS.

- The increased inherent overhead of Gitaly.

The code removed from GitLab during the Gitaly migration project affected these deployments. As a performance workaround for these NFS-based deployments, we re-introduced some of the old Rugged code. This re-introduced code is informally referred to as the "Rugged patches".

How it works

The Ruby methods that perform direct Git access are behind feature flags, disabled by default. It wasn't convenient to set feature flags to get the best performance, so we added an automatic mechanism that enables direct Git access.

When GitLab calls a function that has a "Rugged patch", it performs two checks:

- Is the feature flag for this patch set in the database? If so, the feature flag setting controls the GitLab use of "Rugged patch" code.

- If the feature flag is not set, GitLab tries accessing the file system underneath the

Gitaly server directly. If it can, it uses the "Rugged patch":

- If using Puma and thread count is set

to

1.

- If using Puma and thread count is set

to

The result of these checks is cached.

To see if GitLab can access the repository file system directly, we use the following heuristic:

- Gitaly ensures that the file system has a metadata file in its root with a UUID in it.

- Gitaly reports this UUID to GitLab by using the

ServerInfoRPC. - GitLab Rails tries to read the metadata file directly. If it exists, and if the UUID's match, assume we have direct access.

Direct Git access is enable by default in Omnibus GitLab because it fills in the correct repository

paths in the GitLab configuration file config/gitlab.yml. This satisfies the UUID check.

Transition to Gitaly Cluster

For the sake of removing complexity, we must remove direct Git access in GitLab. However, we can't remove it as long some GitLab installations require Git repositories on NFS.

There are two facets to our efforts to remove direct Git access in GitLab:

- Reduce the number of inefficient Gitaly queries made by GitLab.

- Persuade administrators of fault-tolerant or horizontally-scaled GitLab instances to migrate off NFS.

The second facet presents the only real solution. For this, we developed Gitaly Cluster.

NFS deprecation notice

Engineering support for NFS for Git repositories is deprecated. Technical support is planned to be unavailable beginning November 22, 2022. For further information, please see our NFS Deprecation documentation.