
| stage | group | info | type |
|---|---|---|---|
| Verify | Pipeline Execution | To determine the technical writer assigned to the Stage/Group associated with this page, see https://about.gitlab.com/handbook/engineering/ux/technical-writing/#assignments | index, concepts, howto |

Caching in GitLab CI/CD

A cache is one or more files that a job downloads and saves. Subsequent jobs that use the same cache don't have to download the files again, so they execute more quickly.

To learn how to define the cache in your .gitlab-ci.yml file,

see the cache reference.

How cache is different from artifacts

Use cache for dependencies, like packages you download from the internet. Cache is stored where GitLab Runner is installed and uploaded to S3 if distributed cache is enabled.

- You can define it per job by using the cache: keyword. Otherwise it is disabled.

- You can define it per job so that:

  - Subsequent pipelines can use it.

  - Subsequent jobs in the same pipeline can use it, if the dependencies are identical.

- You cannot share it between projects.

Use artifacts to pass intermediate build results between stages. Artifacts are generated by a job, stored in GitLab, and can be downloaded.

- You can define artifacts per job. Subsequent jobs in later stages of the same pipeline can use them.

- You can't use the artifacts in a different pipeline.

Artifacts expire after 30 days unless you define an expiration time. Use dependencies to control which jobs fetch the artifacts.

Both artifacts and caches define their paths relative to the project directory, and can't link to files outside it.
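As a minimal sketch of how the two might be combined, the following pipeline caches downloaded packages and passes the build output to a later stage as an artifact. The job names, the dist/ directory, the one-week expiry, and the npm run build command are illustrative assumptions, not part of any GitLab template:

```yaml
stages:
  - build
  - test

build:
  stage: build
  script:
    - npm ci --cache .npm --prefer-offline
    - npm run build            # assumed to write its output to dist/
  cache:
    # Dependencies: reused by later pipelines on the same branch
    key: ${CI_COMMIT_REF_SLUG}
    paths:
      - .npm/
  artifacts:
    # Build results: passed to later stages of this pipeline
    paths:
      - dist/
    expire_in: 1 week

test:
  stage: test
  # Fetch artifacts only from the build job
  dependencies:
    - build
  script:
    - ls dist/
```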

Good caching practices

To ensure maximum availability of the cache, when you declare cache in your jobs,

use one or more of the following:

- Tag your runners and use the tag on jobs that share their cache.

- Use runners that are only available to a particular project.

- Use a key that fits your workflow (for example, different caches on each branch). For that, you can take advantage of the predefined CI/CD variables.
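One way these practices might look together is sketched below. The runner tag cache-runner, the job name, and the make build command are hypothetical; the tag must match a tag you assigned to your own runners:

```yaml
build:
  # Run only on runners that carry this (hypothetical) tag,
  # so jobs that share a cache land on the same runners
  tags:
    - cache-runner
  cache:
    # One cache per branch, using a predefined CI/CD variable
    key: ${CI_COMMIT_REF_SLUG}
    paths:
      - vendor/
  script:
    - make build
```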

For runners to work with caches efficiently, you must do one of the following:

- Use a single runner for all your jobs.

- Use multiple runners (in autoscale mode or not) that use distributed caching, where the cache is stored in S3 buckets (like shared runners on GitLab.com).

- Use multiple runners (not in autoscale mode) of the same architecture that share a common network-mounted directory (using NFS or something similar) where the cache is stored.

Read about the availability of the cache to learn more about the internals and get a better idea how cache works.

Share caches between jobs in the same branch

Define a cache with the key: ${CI_COMMIT_REF_SLUG} so that jobs of each

branch always use the same cache:

```yaml
cache:
  key: ${CI_COMMIT_REF_SLUG}
```

This configuration is safe from accidentally overwriting the cache, but the first pipeline of each merge request is slow because the new branch has no cache yet. The next time a new commit is pushed to the branch, the cache is re-used and jobs run faster.

To enable per-job and per-branch caching:

```yaml
cache:
  key: "$CI_JOB_NAME-$CI_COMMIT_REF_SLUG"
```

To enable per-stage and per-branch caching:

```yaml
cache:
  key: "$CI_JOB_STAGE-$CI_COMMIT_REF_SLUG"
```

Share caches across jobs in different branches

To share a cache across all branches and all jobs, use the same key for everything:

```yaml
cache:
  key: one-key-to-rule-them-all
```

To share caches between branches, but have a unique cache for each job:

```yaml
cache:
  key: ${CI_JOB_NAME}
```

Disable cache for specific jobs

If you have defined the cache globally, it means that each job uses the same definition. You can override this behavior per-job, and if you want to disable it completely, use an empty hash:

```yaml
job:
  cache: {}
```

Inherit global configuration, but override specific settings per job

You can override cache settings without overwriting the global cache by using

anchors. For example, if you want to override the

policy for one job:

```yaml
cache: &global_cache
  key: ${CI_COMMIT_REF_SLUG}
  paths:
    - node_modules/
    - public/
    - vendor/
  policy: pull-push

job:
  cache:
    # inherit all global cache settings
    <<: *global_cache
    # override the policy
    policy: pull
```

For more fine-tuning, read about cache: policy.
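As a hedged sketch of how policy might split the work, the following pipeline has one job that only uploads the cache and another that only downloads it. The job names, stage names, the key gems, and the vendor/ruby path are illustrative assumptions:

```yaml
stages:
  - setup
  - test

# Hypothetical job that populates the cache without downloading it first
prepare:
  stage: setup
  cache:
    key: gems
    paths:
      - vendor/ruby
    policy: push
  script:
    - bundle install --path vendor/ruby

# Hypothetical job that downloads the cache but never uploads changes
rspec:
  stage: test
  cache:
    key: gems
    paths:
      - vendor/ruby
    policy: pull
  script:
    # Still install, in case the cache was not available
    - bundle install --path vendor/ruby
    - bundle exec rspec
```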

Common use cases

The most common use case of caching is to avoid downloading content like dependencies or libraries repeatedly between subsequent runs of jobs. Node.js packages, PHP packages, Ruby gems, Python libraries, and others can all be cached.

For more examples, check out our GitLab CI/CD templates.

Cache Node.js dependencies

If your project is using npm to install the Node.js

dependencies, the following example defines cache globally so that all jobs inherit it.

By default, npm stores cache data in the home folder ~/.npm but you

can't cache things outside of the project directory.

Instead, we tell npm to use ./.npm, and cache it per-branch:

```yaml
#
# https://gitlab.com/gitlab-org/gitlab/-/tree/master/lib/gitlab/ci/templates/Nodejs.gitlab-ci.yml
#
image: node:latest

# Cache modules in between jobs
cache:
  key: ${CI_COMMIT_REF_SLUG}
  paths:
    - .npm/

before_script:
  - npm ci --cache .npm --prefer-offline

test_async:
  script:
    - node ./specs/start.js ./specs/async.spec.js
```

Cache PHP dependencies

Assuming your project is using Composer to install

the PHP dependencies, the following example defines cache globally so that

all jobs inherit it. PHP libraries are installed in vendor/ and

are cached per-branch:

```yaml
#
# https://gitlab.com/gitlab-org/gitlab/-/tree/master/lib/gitlab/ci/templates/PHP.gitlab-ci.yml
#
image: php:7.2

# Cache libraries in between jobs
cache:
  key: ${CI_COMMIT_REF_SLUG}
  paths:
    - vendor/

before_script:
  # Install and run Composer
  - curl --show-error --silent "https://getcomposer.org/installer" | php
  - php composer.phar install

test:
  script:
    - vendor/bin/phpunit --configuration phpunit.xml --coverage-text --colors=never
```

Cache Python dependencies

Assuming your project is using pip to install

the Python dependencies, the following example defines cache globally so that

all jobs inherit it. Python libraries are installed in a virtual environment under venv/, and

pip's cache is defined under .cache/pip/. Both are cached per-branch:

```yaml
#
# https://gitlab.com/gitlab-org/gitlab/-/tree/master/lib/gitlab/ci/templates/Python.gitlab-ci.yml
#
image: python:latest

# Change pip's cache directory to be inside the project directory since we can
# only cache local items.
variables:
  PIP_CACHE_DIR: "$CI_PROJECT_DIR/.cache/pip"

# Pip's cache doesn't store the python packages
# https://pip.pypa.io/en/stable/reference/pip_install/#caching
#
# If you want to also cache the installed packages, you have to install
# them in a virtualenv and cache it as well.
cache:
  # Per-branch key, as in the other examples on this page
  key: ${CI_COMMIT_REF_SLUG}
  paths:
    - .cache/pip
    - venv/

before_script:
  - python -V  # Print out python version for debugging
  - pip install virtualenv
  - virtualenv venv
  - source venv/bin/activate

test:
  script:
    - python setup.py test
    - pip install flake8
    - flake8 .
```

Cache Ruby dependencies

Assuming your project is using Bundler to install the

gem dependencies, the following example defines cache globally so that all

jobs inherit it. Gems are installed in vendor/ruby/ and are cached per-branch:

```yaml
#
# https://gitlab.com/gitlab-org/gitlab/-/tree/master/lib/gitlab/ci/templates/Ruby.gitlab-ci.yml
#
image: ruby:2.6

# Cache gems in between builds
cache:
  key: ${CI_COMMIT_REF_SLUG}
  paths:
    - vendor/ruby

before_script:
  - ruby -v  # Print out ruby version for debugging
  - bundle install -j $(nproc) --path vendor/ruby  # Install dependencies into ./vendor/ruby

rspec:
  script:
    - rspec spec
```

If you have jobs that each need a different selection of gems, use the prefix

keyword in the global cache definition. This configuration generates a different

cache for each job.

For example, a testing job might not need the same gems as a job that deploys to production:

```yaml
cache:
  key:
    files:
      - Gemfile.lock
    prefix: ${CI_JOB_NAME}
  paths:
    - vendor/ruby

test_job:
  stage: test
  before_script:
    - bundle install --without production --path vendor/ruby
  script:
    - bundle exec rspec

deploy_job:
  # Assumes a "production" stage is declared under stages:
  stage: production
  before_script:
    - bundle install --without test --path vendor/ruby
  script:
    - bundle exec deploy
```

Cache Go dependencies

Assuming your project is using Go Modules to install

Go dependencies, the following example defines cache in a .go-cache template that

any job can extend. Go modules are installed in ${GOPATH}/pkg/mod/ and

are cached for all of the Go projects:

```yaml
.go-cache:
  variables:
    GOPATH: $CI_PROJECT_DIR/.go
  before_script:
    - mkdir -p .go
  cache:
    paths:
      - .go/pkg/mod/

test:
  image: golang:1.13
  extends: .go-cache
  script:
    - go test ./... -v -short
```

Availability of the cache

Caching is an optimization, but it isn't guaranteed to always work. You need to be prepared to regenerate any cached files in each job that needs them.
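A minimal sketch of a job that tolerates a missing cache, assuming an npm project (the job name and the npm test command are illustrative). The install step runs on every execution, so it is fast when .npm/ was restored and still correct when it was not:

```yaml
test:
  cache:
    key: ${CI_COMMIT_REF_SLUG}
    paths:
      - .npm/
  script:
    # Always install: uses the restored cache if present,
    # otherwise downloads the packages again
    - npm ci --cache .npm --prefer-offline
    - npm test
```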

After you have defined a cache in .gitlab-ci.yml,

the availability of the cache depends on:

- The runner's executor type.

- Whether different runners are used to pass the cache between jobs.

Where the caches are stored

The runner is responsible for storing the cache, so it's essential

to know where it's stored. All the cache paths defined under a job in

.gitlab-ci.yml are archived in a single cache.zip file and stored in the

runner's configured cache location. By default, the cache is stored locally on the machine where the runner is installed, and the exact path depends on the executor type.

| GitLab Runner executor | Default path of the cache |
|---|---|
| Shell | Locally, stored under the gitlab-runner user's home directory: /home/gitlab-runner/cache/<user>/<project>/<cache-key>/cache.zip. |
| Docker | Locally, stored under Docker volumes: /var/lib/docker/volumes/<volume-id>/_data/<user>/<project>/<cache-key>/cache.zip. |
| Docker machine (autoscale runners) | Behaves the same as the Docker executor. |

If you use cache and artifacts to store the same path in your jobs, the cache might be overwritten because caches are restored before artifacts.

How archiving and extracting works

This example has two jobs that belong to two consecutive stages:

```yaml
stages:
  - build
  - test

before_script:
  - echo "Hello"

job A:
  stage: build
  script:
    - mkdir vendor/
    - echo "build" > vendor/hello.txt
  cache:
    key: build-cache
    paths:
      - vendor/
  after_script:
    - echo "World"

job B:
  stage: test
  script:
    - cat vendor/hello.txt
  cache:
    key: build-cache
    paths:
      - vendor/
```

If you have one machine with one runner installed, and all jobs for your project run on the same host:

- Pipeline starts.
- job A runs.
- before_script is executed.
- script is executed.
- after_script is executed.
- cache runs and the vendor/ directory is zipped into cache.zip. This file is then saved in the directory based on the runner's setting and the cache: key.
- job B runs.
- The cache is extracted (if found).
- before_script is executed.
- script is executed.
- Pipeline finishes.

By using a single runner on a single machine, you don't have the issue where

job B might execute on a runner different from job A. This setup guarantees the

cache can be reused between stages. It only works if the execution goes from the build stage

to the test stage in the same runner/machine. Otherwise, the cache might not be available.

During the caching process, there are also a couple of things to consider:

- If some other job with another cache configuration had saved its cache in the same zip file, it is overwritten. If the S3-based shared cache is used, the file is additionally uploaded to S3 to an object based on the cache key. So, two jobs with different paths but the same cache key overwrite each other's cache.

- When extracting the cache from cache.zip, everything in the zip file is extracted in the job's working directory (usually the repository which is pulled down), and the runner doesn't mind if the archive of job A overwrites things in the archive of job B.

It works this way because the cache created for one runner often isn't valid when used by a different one. A different runner may run on a different architecture (for example, when the cache includes binary files). Also, because the different steps might be executed by runners running on different machines, it is a safe default.

Cache mismatch

The following table lists some reasons why you might hit a cache mismatch and a few ideas for how to fix it.

| Reason for a cache mismatch | How to fix it |
|---|---|
| You use multiple standalone runners (not in autoscale mode) attached to one project without a shared cache. | Use only one runner for your project or use multiple runners with distributed cache enabled. |
| You use runners in autoscale mode without a distributed cache enabled. | Configure the autoscale runner to use a distributed cache. |
| The machine the runner is installed on is low on disk space or, if you've set up distributed cache, the S3 bucket where the cache is stored doesn't have enough space. | Make sure you clear some space to allow new caches to be stored. There's no automatic way to do this. |
| You use the same key for jobs where they cache different paths. | Use different cache keys so that the cache archive is stored in a different location and doesn't overwrite the wrong caches. |

Let's explore some examples.

Examples

Let's assume you have only one runner assigned to your project, so the cache is stored in the runner's machine by default.

Two jobs could cause caches to be overwritten if they have the same cache key, but they cache a different path:

```yaml
stages:
  - build
  - test

job A:
  stage: build
  script: make build
  cache:
    key: same-key
    paths:
      - public/

job B:
  stage: test
  script: make test
  cache:
    key: same-key
    paths:
      - vendor/
```

- job A runs.
- public/ is cached as cache.zip.
- job B runs.
- The previous cache, if any, is unzipped.
- vendor/ is cached as cache.zip and overwrites the previous one.
- The next time job A runs, it uses the cache of job B, which is different and thus isn't effective.

To fix that, use different keys for each job.
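A sketch of the corrected configuration, where the key names build-key and test-key are hypothetical; any two distinct keys have the same effect:

```yaml
stages:
  - build
  - test

job A:
  stage: build
  script: make build
  cache:
    key: build-key   # no longer shared with job B
    paths:
      - public/

job B:
  stage: test
  script: make test
  cache:
    key: test-key    # separate archive for vendor/
    paths:
      - vendor/
```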

In another case, let's assume you have more than one runner assigned to your

project, but the distributed cache is not enabled. The second time the

pipeline is run, we want job A and job B to re-use their cache (which in this case

is different):

```yaml
stages:
  - build
  - test

job A:
  stage: build
  script: build
  cache:
    key: keyA
    paths:
      - vendor/

job B:
  stage: test
  script: test
  cache:
    key: keyB
    paths:
      - vendor/
```

Even if the key is different, the cached files might get "cleaned" before each

stage if the jobs run on different runners in the subsequent pipelines.

Clearing the cache

Runners use cache to speed up the execution of your jobs by reusing existing data. However, this can sometimes lead to inconsistent behavior.

You can start with a fresh copy of the cache in two ways.

Clearing the cache by changing cache:key

All you have to do is set a new cache: key in your .gitlab-ci.yml. In the

next run of the pipeline, the cache is stored in a different location.
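One possible convention, shown here only as a sketch, is to put a version prefix in the key and bump it when you need a clean cache. The v2 prefix and the vendor/ path are hypothetical:

```yaml
cache:
  # Previously "v1-$CI_COMMIT_REF_SLUG"; changing the prefix
  # makes the next pipeline start from an empty cache
  key: "v2-$CI_COMMIT_REF_SLUG"
  paths:
    - vendor/
```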

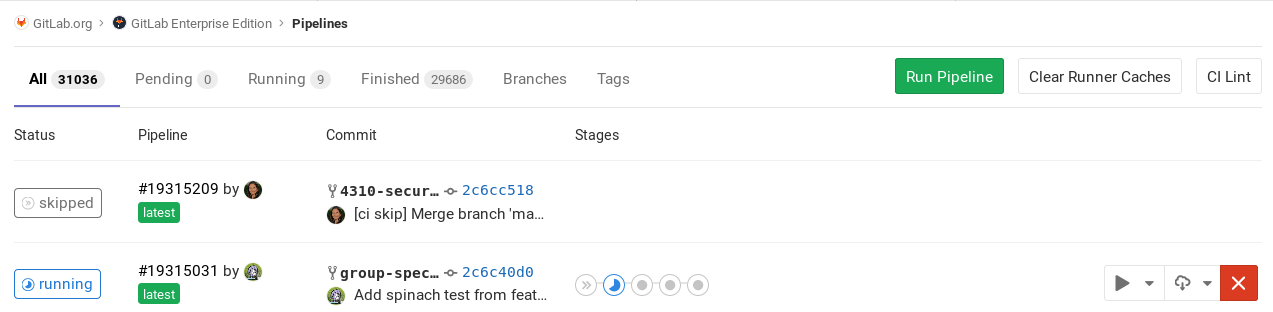

Clearing the cache manually

Introduced in GitLab 10.4.

If you want to avoid editing .gitlab-ci.yml, you can clear the cache

via the GitLab UI:

- Navigate to your project's CI/CD > Pipelines page.
- Click on the Clear runner caches button to clean up the cache.
- On the next push, your CI/CD job uses a new cache.

NOTE:

Each time you clear the cache manually, the internal cache name is updated. The name uses the format cache-<index>, and the index increments by one each time. The old cache is not deleted. You can manually delete these files from the runner storage.